AI Contextual Governance Framework: A Practical Guide

An AI contextual governance framework is becoming crucial as companies increasingly depend on AI to make real-world decisions. This approach is especially useful for organizations looking to build an AI governance framework that aligns with real business risk.

The experimental phase of AI has ended. Businesses now use it for workflow automation, fraud detection, loan approval, and product recommendations.

Only a small portion of companies have well-developed governance procedures in place, even though over 50% of them currently use AI in at least one business function. As adoption rises, this gap keeps widening.

However, governance has not kept up, which is evident in real-world projects. Teams build and deploy models quickly, but governance is often overlooked. By the time someone asks about oversight, the model is already in production.

Think about this for a moment. One team we collaborated with had two models operating side by side. One influenced customer pricing, and the other was responsible for internal reporting. Yet both went through the same approval process. The gap between governance and actual business risk became apparent at that point.

This is where an AI contextual governance framework can be very beneficial.

It modifies governance according to business context, risk level, and real-world impact rather than implementing the same controls everywhere. Each model is given the proper amount of attention in this way. Nothing superfluous, nothing neglected.

Why the Contextual Governance Framework for AI Is More Important Than Ever

AI is now widely used in business operations. Therefore, even small model errors can be harmful.

Take the fraud detection model of a banking system, for example. If it fails, customers could lose money, and the business could face regulatory fines. Compare that to a model that predicts internal sales trends. A failure there has a very different effect.

Many organizations still govern both models the same way. For precisely this reason, an AI contextual governance framework is required. It establishes a direct link between business impact and governance decisions.

Why Traditional AI Governance Frameworks Fall Short

Static Controls in Dynamic AI Environments

Fixed rules are the foundation of traditional governance frameworks. But AI systems are always evolving. Market conditions change, customer behavior changes, and data is updated. Even so, governance frequently remains unchanged. Consequently, a glaring mismatch emerges.

I recall an instance where a low-risk model had to go through several levels of review before it could be approved, which took weeks. Simultaneously, a more critical model advanced with fewer checks because the procedure was not intended to distinguish between the two.

Governance Detached from Business Context

Another common issue is the disconnect between business reality and governance. In many organizations, governance teams operate separately from business units. As a result, they may not fully understand how a model affects sales or customers.

For instance, a pricing model that directly impacts customers should not be subject to the same level of oversight as an internal analytics tool. Without context, both could go through the same processes. This leads to either under-governance or over-governance.

Limited Feedback and Learning Loops

Traditional governance frequently ends at validation. Once a model has been approved, teams deploy it and move on. But AI systems keep changing even after deployment.

New information comes in. Patterns change. Performance can drift. The drift is easy to overlook at first because it rarely shows up immediately. Over time, a model that looked flawless at launch may gradually become less accurate. Without adequate monitoring, the problem goes undetected until it begins to affect results.

What Is an AI Contextual Governance Framework?

An AI contextual governance framework is a governance model that dynamically adjusts oversight, validation, monitoring, and documentation standards based on the business context and risk classification of each AI system.

In other words, it adjusts oversight to the business context and risk level of each AI model.

Instead of asking:

“Has this model been validated?”

It asks:

“Is this model governed appropriately for its real-world impact?”

This shift makes governance more practical and effective.

Core Principles

At its core, this framework is built on a few key ideas.

- First, governance should be risk-based. Not all models carry the same level of risk, so they should not be treated the same way.

- Second, governance should align with business needs. It should reflect how AI is actually used, not just how it is built.

- Third, human oversight remains important. Even advanced AI systems require human judgment, especially in high-risk scenarios.

- Fourth, governance should evolve over time. As models change, governance processes should adapt.

- Finally, accountability must be clear. Every model should have defined ownership so responsibilities are never unclear.

How It Differs from Traditional Governance

In practice, an AI contextual governance framework starts with understanding how each model is used.

Organizations first evaluate the business function of a model. Then they assess its impact on customers, revenue, and compliance. Based on this, they assign a risk level.

For example, a model used for internal reporting may fall into a low-risk category. It requires only basic validation and minimal oversight. On the other hand, a model used for financial decision-making would be considered high risk. It would require detailed validation, strict monitoring, and human approval before deployment.

When teams start applying this approach, the shift is noticeable. Instead of asking “What’s the standard process?”, they begin asking “What makes sense for this model?” That small change often leads to better decisions.

Core Components of an AI Contextual Governance Framework

A strong AI contextual governance framework is built on a few key components. Each one ensures that governance aligns with business context and risk. Together, these elements form a strong AI oversight framework that ensures consistent and reliable governance.

1. Business Context Classification

Organizations should classify AI models based on where and how they are used.

This covers:

- Business functions, such as underwriting, claims processing, or marketing automation

- Financial impact

- Customer exposure

- Regulatory sensitivity

Based on these factors, models can be grouped into:

- Low risk

- Moderate risk

- High risk

This enables business-specific AI governance and ensures that oversight matches real-world impact.
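The classification step above can be sketched in a few lines of code. This is only an illustration, not a prescribed method: the 1-to-3 factor scores, the `ModelContext` fields, and the "worst factor wins" rule are all assumptions made for the example. A real scheme would come from the organization’s own risk policy.

```python
from dataclasses import dataclass


# Hypothetical scoring scale for each factor: 1 = low, 2 = moderate, 3 = high.
@dataclass(frozen=True)
class ModelContext:
    name: str
    business_function: str
    financial_impact: int
    customer_exposure: int
    regulatory_sensitivity: int


def classify_risk(ctx: ModelContext) -> str:
    """Map context factors to a risk tier (illustrative rule only)."""
    scores = (ctx.financial_impact, ctx.customer_exposure, ctx.regulatory_sensitivity)
    if any(s not in (1, 2, 3) for s in scores):
        raise ValueError("each factor score must be 1, 2, or 3")
    # Assumption: the tier follows the worst single factor, so one
    # high-impact dimension is enough to make the whole model high risk.
    return {1: "low", 2: "moderate", 3: "high"}[max(scores)]


reporting = ModelContext("internal-reporting", "analytics", 1, 1, 1)
pricing = ModelContext("customer-pricing", "pricing", 3, 3, 2)

print(classify_risk(reporting))
print(classify_risk(pricing))
```

Taking the highest factor rather than an average is a deliberate choice in this sketch: it keeps a high regulatory sensitivity from being diluted by low scores elsewhere.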

2. Risk-Based Governance Controls

Governance controls should vary based on the assigned risk tier.

For example:

- Tier 1 models may require basic documentation and minimal review

- Tier 2 models need structured validation and oversight checkpoints

- Tier 3 models require audit-ready documentation and executive-level visibility

This risk-based AI governance approach aligns with enterprise AI risk management. It also ensures that resources are focused where they matter most.
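One way to make tiered controls concrete is a simple lookup from tier to required controls. The sketch below is hypothetical: the control names are invented labels for the items listed above, and the idea that higher tiers also inherit validation and checkpoint controls is an assumption, not something the tiers above state.

```python
# Hypothetical control names per tier, loosely following the list above.
# Assumption: Tier 3 also carries the Tier 2 validation and checkpoint controls.
REQUIRED_CONTROLS = {
    1: {"basic_documentation", "minimal_review"},
    2: {"basic_documentation", "structured_validation", "oversight_checkpoint"},
    3: {
        "audit_ready_documentation",
        "structured_validation",
        "oversight_checkpoint",
        "executive_visibility",
    },
}


def missing_controls(tier: int, completed: set[str]) -> set[str]:
    """Return the controls still outstanding for a model at the given tier."""
    if tier not in REQUIRED_CONTROLS:
        raise ValueError(f"unknown tier: {tier}")
    return REQUIRED_CONTROLS[tier] - completed


outstanding = missing_controls(2, {"basic_documentation", "structured_validation"})
print(sorted(outstanding))
```

Keeping the mapping as data rather than logic means a governance team could adjust a tier’s requirements without touching the check itself.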

3. Organizational Alignment and Accountability

Clear ownership is essential for effective governance.

Organizations should define:

- Model owners and validators

- Roles using a RACI framework

- Cross-functional governance committees

- Escalation paths for high-risk systems

This improves organizational alignment around AI governance and ensures accountability across teams.
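Ownership gaps are easy to check for once RACI assignments are written down. The following is a minimal sketch under stated assumptions: the `raci` structure and team names are made up, and the rule that each model has exactly one accountable party reflects a common RACI convention rather than a requirement from this guide.

```python
# Hypothetical RACI assignments: model name -> {person or team: role letter}.
# R = responsible, A = accountable, C = consulted, I = informed.
raci = {
    "customer-pricing": {"pricing-team": "R", "head-of-pricing": "A", "model-risk": "C"},
    "internal-reporting": {"analytics-team": "R"},
}


def accountability_gaps(assignments: dict[str, dict[str, str]]) -> dict[str, str]:
    """Flag models that do not have exactly one accountable party."""
    gaps = {}
    for model, roles in assignments.items():
        accountable = [who for who, role in roles.items() if role == "A"]
        if len(accountable) == 0:
            gaps[model] = "no accountable owner"
        elif len(accountable) > 1:
            gaps[model] = "multiple accountable owners"
    return gaps


print(accountability_gaps(raci))
```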

4. Human Validation and Oversight

Human involvement is critical for high-risk AI systems.

You’ll typically see:

- Human-in-the-loop review processes

- Ethical oversight where needed

- Pre-deployment sign-off

- Exception handling and override mechanisms

This is where human validation plays a key role in reducing risk.

5. Continuous Learning and Improvement Loop

Governance should evolve over time.

Organizations should:

- Monitor model performance continuously

- Detect and respond to model drift

- Feed insights back into governance policies

- Conduct governance maturity assessments every 6–12 months

This creates a governance learning loop and supports continuous improvement.
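Drift detection can take many forms, and this guide does not prescribe one. As an illustration, the sketch below uses the Population Stability Index (PSI), a widely used measure of how far a distribution has shifted from its baseline. The bucket proportions are made-up numbers, and the `DRIFT_THRESHOLD` of 0.2 is an assumed cutoff for the example, not a standard to adopt as is.

```python
import math

DRIFT_THRESHOLD = 0.2  # assumed cutoff for this example only


def population_stability_index(expected: list[float], actual: list[float]) -> float:
    """PSI between two binned distributions given as proportions per bin."""
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    eps = 1e-6  # floor to avoid log(0) or division by zero on empty bins
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Share of scored records per score bucket at validation time vs. today.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]

psi = population_stability_index(baseline, current)
needs_review = psi > DRIFT_THRESHOLD
print(round(psi, 3), needs_review)
```

In a contextual setup, the threshold itself could vary by risk tier, so that high-risk models are flagged for review on smaller shifts.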

How an AI Contextual Governance Framework Works Across the AI Lifecycle

Governance should not be a one-time activity. Instead, it should be embedded across the entire AI lifecycle. This enables a more context-aware AI governance approach that adapts to changing business conditions.

Development Stage

During development, teams should begin with early classification of business context.

At a basic level, this involves:

- Identifying the model’s purpose

- Assigning a risk tier before validation begins

- Defining governance documentation requirements upfront

This allows expectations to be clear from the start. It also reduces delays later.

Validation Stage

In the validation stage, governance should follow a context-aware validation depth.

This means:

- Applying tiered review processes based on risk

- Conducting deeper validation for high-risk models

- Including ethical and fairness evaluations where required

This approach strengthens contextual model validation while keeping it efficient, because validation depth matches the model’s business impact and risk level.

Deployment Stage

Before deployment, a governance sign-off checkpoint should be completed.

At this stage:

- All approvals are finalized

- Documentation is reviewed

- Contextual monitoring triggers are activated

This creates a clear transition from development to production.
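The sign-off checkpoint can be thought of as a gate that lists what is still blocking a release. Here is a minimal sketch of that idea; the `ReleaseCandidate` fields and approver names are hypothetical and simply mirror the three checks above.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseCandidate:
    name: str
    required_approvers: set[str]
    approvals: set[str] = field(default_factory=set)
    documentation_reviewed: bool = False
    monitoring_triggers_active: bool = False


def signoff_blockers(rc: ReleaseCandidate) -> list[str]:
    """List the reasons a model cannot yet be promoted to production."""
    blockers = []
    pending = rc.required_approvers - rc.approvals
    if pending:
        blockers.append("missing approvals: " + ", ".join(sorted(pending)))
    if not rc.documentation_reviewed:
        blockers.append("documentation not reviewed")
    if not rc.monitoring_triggers_active:
        blockers.append("monitoring triggers not activated")
    return blockers


candidate = ReleaseCandidate(
    name="customer-pricing",
    required_approvers={"model-owner", "validator"},
    approvals={"model-owner"},
    documentation_reviewed=True,
)
print(signoff_blockers(candidate))
```

An empty list means the gate is clear. Returning reasons instead of a plain yes or no gives the team something to act on.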

Post-Deployment Monitoring

After deployment, continuous monitoring becomes essential.

Teams should:

- Track model performance regularly

- Recalibrate risk if business context changes

- Conduct periodic revalidation for high-impact models

As a result, governance remains effective as models evolve over time.
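Periodic revalidation is simple to automate once each tier has an interval. The sketch below assumes hypothetical intervals (180 days for high risk, 365 for moderate, none for low); the actual cadence is a policy decision that this guide leaves open.

```python
from datetime import date, timedelta

# Hypothetical revalidation intervals in days. In this sketch, low-risk
# models are not revalidated on a fixed schedule.
REVALIDATION_INTERVAL_DAYS = {"high": 180, "moderate": 365, "low": None}


def revalidation_due(tier: str, last_validated: date, today: date) -> bool:
    """Return True when a model's periodic revalidation is due or overdue."""
    interval = REVALIDATION_INTERVAL_DAYS[tier]
    if interval is None:
        return False
    return today - last_validated >= timedelta(days=interval)


last_check = date(2024, 1, 1)
as_of = date(2024, 8, 1)

for tier in ("high", "moderate", "low"):
    print(tier, revalidation_due(tier, last_check, as_of))
```

If a model’s business context changes and its tier is recalibrated, the same check picks up the new interval automatically.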

Benefits of Implementing a Contextual AI Governance Framework

1. Reduced Regulatory Exposure

One of the most important benefits is reduced regulatory exposure. When governance aligns with regulatory sensitivity, organizations can focus more on high-risk models. This helps ensure compliance while avoiding unnecessary effort on low-risk systems.

2. Improved Operational Efficiency

Another key advantage is improved operational efficiency. Low-risk models no longer go through unnecessary bureaucratic steps. As a result, teams can move faster and spend more time improving models that actually matter. In many cases, this also reduces delays and internal friction.

3. Scalable Oversight

As AI adoption grows, governance must scale with it. A contextual AI governance framework provides scalable oversight that adapts as the AI portfolio expands. Instead of creating bottlenecks, it supports growth while maintaining control.

4. Stronger Executive Confidence

Clear governance structures improve visibility at the leadership level. Executives gain a better understanding of the organization’s AI oversight posture. This makes it easier to make informed decisions and manage risk effectively.

5. Enhanced Trust and Transparency

Finally, this framework strengthens trust and transparency. When stakeholders understand how governance decisions are made, they feel more confident in AI-driven outcomes. This is especially important in industries where AI directly impacts customers.

Common Challenges in Building a Contextual Governance Framework

While the benefits are clear, many organizations face practical challenges when implementing an AI contextual governance framework.

1. Lack of Centralized AI Inventory

One of the most common issues is the lack of a centralized AI inventory. Without a clear view of all models across the organization, it becomes difficult to apply consistent governance. Teams often miss models or apply inconsistent controls.

2. Resistance from Development Teams

Another challenge is resistance from development teams. Some teams see governance as a barrier that slows down innovation. However, when implemented correctly, contextual governance actually reduces unnecessary steps and improves clarity.

3. Overengineering Controls for Low-Risk Use Cases

Organizations sometimes apply strict governance controls across all models. This leads to overengineering, especially for low-risk use cases. As a result, teams waste time on unnecessary processes instead of focusing on high-impact systems.

4. Underestimating Regulatory Sensitivity

In some cases, organizations underestimate the regulatory sensitivity of certain models. This can lead to insufficient oversight for high-risk systems, increasing the likelihood of compliance issues or operational risk.

For example, real-world cases show how model inaccuracies can lead to serious consequences when governance is weak. You can explore one such case here: when accuracy fails high stakes in insurance ai.

5. Absence of a Supporting AI Governance Platform

Finally, many organizations lack a supporting AI governance platform. Without the right tools, it becomes difficult to manage workflows, track models, and maintain consistent governance practices at scale.

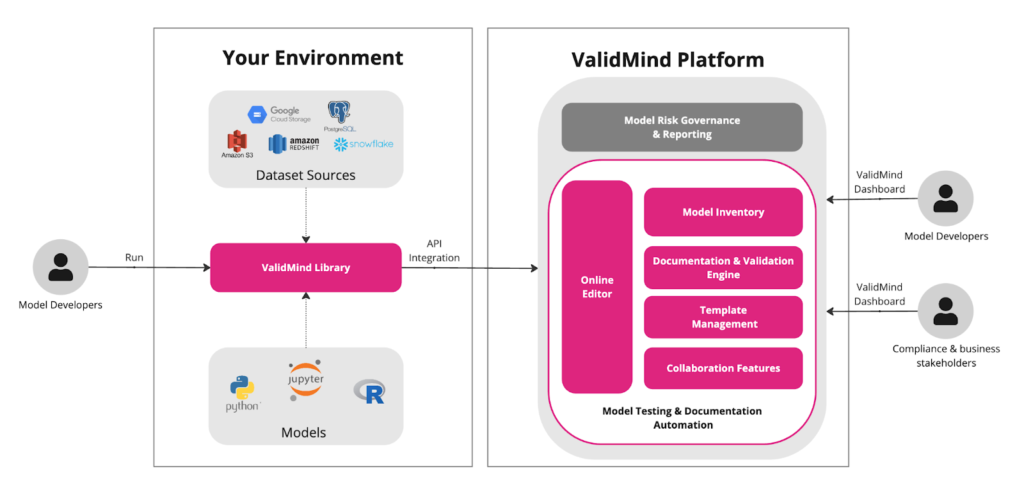

How an AI Governance Platform Enables Contextual Governance

A strong AI governance platform plays a key role in making contextual governance practical and scalable.

Centralized Model Inventory

An AI governance platform provides a centralized model inventory that offers a unified view of AI systems across business units. This makes it easier to track models, understand their purpose, and apply consistent governance controls.

Configurable Risk-Based Workflows

Modern platforms support configurable risk-based workflows. This allows organizations to automate tier-based validation processes based on risk levels. As a result, low-risk models move faster, while high-risk models receive deeper scrutiny.

Context-Aware Documentation Controls

Another important capability is context-aware documentation control. The platform stores and organizes validation artifacts based on risk levels. This ensures that documentation requirements align with governance needs without creating unnecessary overhead.

Continuous Monitoring and Governance Visibility

An AI governance platform also provides continuous monitoring and visibility.

Lifecycle dashboards give teams a clear view of model performance, risk status, and governance activity. This helps organizations respond quickly to changes and maintain control at scale.

In this way, an AI governance platform enables a contextual AI governance solution that aligns oversight with business risk and operational needs.

Step-by-Step Guide to Building an AI Contextual Governance Framework

Building an AI contextual governance framework requires a structured and practical approach. The following steps help organizations implement governance effectively.

1. Map and Classify AI Systems by Business Impact

Start by identifying all AI systems across the organization. Then classify them based on business impact, customer exposure, and regulatory sensitivity. This creates a clear foundation for contextual governance.

2. Define Risk Tiers and Governance Standards

Next, define risk tiers such as low, moderate, and high. For each tier, establish governance standards, including validation requirements, documentation levels, and oversight expectations.

3. Establish Ownership and Accountability Structures

Clearly define who is responsible for each model. This includes assigning ownership, setting accountability structures, and aligning teams through governance roles and responsibilities.

4. Implement Validation Templates and Monitoring Triggers

Standardize validation processes using templates. At the same time, set up monitoring triggers that track performance and alert teams when risks change or models drift.
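A monitoring trigger can be as simple as a tolerance that tightens with risk. The following sketch is illustrative only: the tolerated accuracy drops per tier are assumed values, and accuracy stands in for whatever performance metric a team actually tracks.

```python
# Hypothetical tolerated absolute drop in accuracy before alerting, per tier.
MAX_ACCURACY_DROP = {"low": 0.10, "moderate": 0.05, "high": 0.02}


def should_alert(tier: str, baseline_accuracy: float, current_accuracy: float) -> bool:
    """Return True when performance has fallen further than the tier tolerates."""
    drop = baseline_accuracy - current_accuracy
    return drop > MAX_ACCURACY_DROP[tier]


# The same four-point drop triggers an alert only for the high-risk model.
for tier in ("low", "moderate", "high"):
    print(tier, should_alert(tier, baseline_accuracy=0.92, current_accuracy=0.88))
```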

5. Introduce Governance Performance Metrics

Measure how well governance is working. Define governance performance metrics such as validation timelines, model risk coverage, and compliance status.
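These metrics can be computed directly from an inventory export. The sketch below assumes a hypothetical record layout (`tier`, `validation_days`, `compliant`) and defines risk coverage as the share of models with a tier assigned; both are illustrative choices rather than fixed definitions.

```python
from statistics import mean

# Hypothetical inventory records; field names are illustrative.
models = [
    {"name": "fraud-detection", "tier": "high", "validation_days": 30, "compliant": True},
    {"name": "sales-forecast", "tier": "low", "validation_days": 5, "compliant": True},
    {"name": "pricing", "tier": None, "validation_days": None, "compliant": False},
]


def governance_metrics(inventory: list[dict]) -> dict[str, float]:
    """Summarize validation timelines, risk coverage, and compliance."""
    if not inventory:
        raise ValueError("inventory is empty")
    durations = [m["validation_days"] for m in inventory if m["validation_days"] is not None]
    return {
        # Average time to validate, over models that have been validated.
        "avg_validation_days": mean(durations) if durations else 0.0,
        # Share of models that have a risk tier assigned.
        "risk_coverage": sum(m["tier"] is not None for m in inventory) / len(inventory),
        # Share of models currently marked compliant.
        "compliance_rate": sum(m["compliant"] for m in inventory) / len(inventory),
    }


print(governance_metrics(models))
```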

6. Conduct Periodic Governance Maturity Reviews

Finally, review governance practices regularly. Conduct governance maturity model assessments every 6–12 months to identify gaps and improve processes over time.

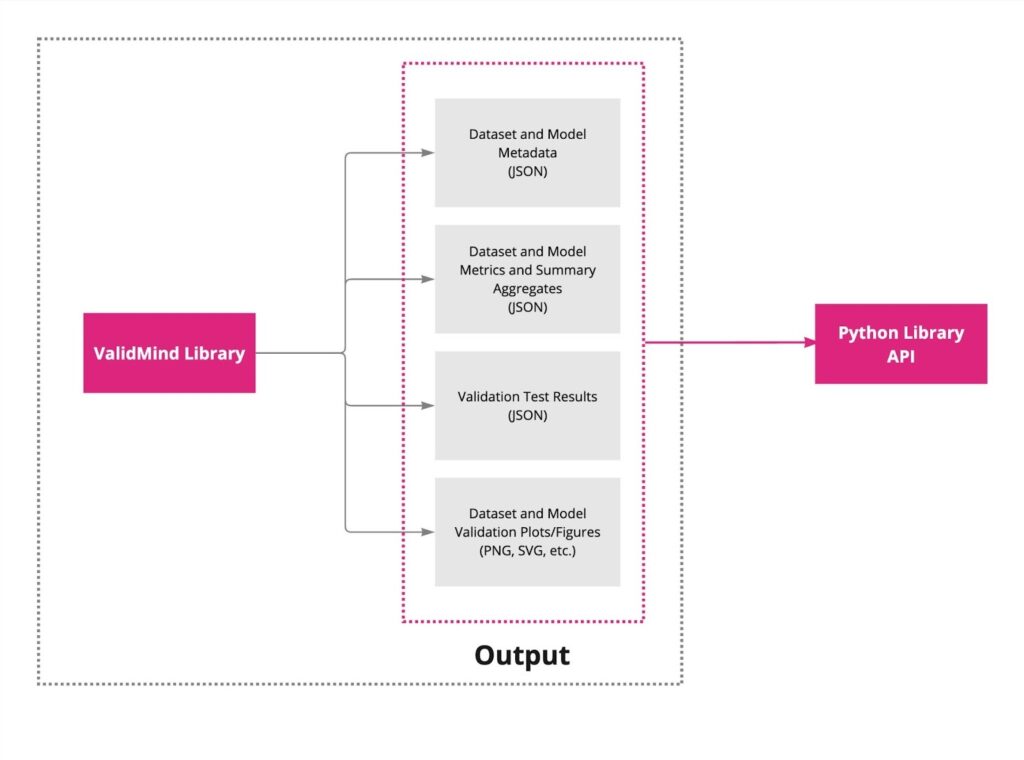

How ValidMind Enables an AI Contextual Governance Framework

ValidMind provides a comprehensive set of tools that help organizations implement an AI contextual governance framework at scale.

Core Platform Capabilities

1. Centralized AI Model Inventory

ValidMind offers a centralized AI model inventory that provides unified visibility across business units.

For example, this includes:

- A single source of truth for all AI models

- Context-based model classification

- Risk-tier tagging and categorization

2. Context-Aware Risk Classification

ValidMind enables context-aware risk classification by aligning models with real-world impact.

This ensures:

- Business-specific model segmentation

- Regulatory sensitivity mapping

- Financial and customer impact alignment

3. Risk-Based Governance Workflows

The platform supports risk-based governance workflows that adapt to model risk levels.

In practice, this approach ensures:

- Tiered validation processes

- Configurable approval checkpoints

- Role-based oversight controls

Governance and Oversight Features

1. Human-in-the-Loop Governance Controls

ValidMind includes human-in-the-loop governance controls for high-risk models.

A few key elements are:

- Structured review and sign-off workflows

- Clear accountability assignment

- Exception handling and escalation mechanisms

2. Continuous Lifecycle Monitoring

The platform enables continuous lifecycle monitoring of AI systems.

This includes:

- Model drift tracking

- Performance-based governance triggers

- Ongoing contextual refinement

Compliance and Visibility

1. Audit-Ready Documentation and Evidence Management

ValidMind supports audit-ready documentation and evidence management.

This involves:

- Centralized validation artifacts

- Version control and audit trails

- Standardized documentation templates

2. Enterprise Governance Visibility

ValidMind provides enterprise-level governance visibility.

In practice, this approach ensures:

- Portfolio-level oversight dashboards

- Risk distribution insights

- Executive reporting capabilities

Conclusion

AI governance is shifting from static compliance to contextual oversight. Instead of applying the same rules everywhere, organizations are beginning to align governance intensity with real business context. This shift makes governance more practical and more effective.

When business context drives governance decisions, teams can focus their efforts where risk actually exists. Low-risk models move faster, while high-risk systems receive deeper scrutiny.

Enterprises that implement an AI contextual governance framework achieve scalable, risk-aligned AI oversight. This allows them to grow their AI initiatives without losing control or increasing exposure.

Over time, governance maturity becomes more than just a compliance requirement. It turns into a competitive advantage, helping organizations move faster, operate with confidence, and build stronger trust in AI-driven decisions.

If you’re looking to strengthen your approach, you can explore how ValidMind supports contextual AI governance in practice. To see how it works in real scenarios, you can request a demo and see these capabilities in action.

AI Contextual Governance Framework FAQs

1. What is an AI contextual governance framework?

An AI contextual governance framework is a governance model that adjusts oversight, validation, and monitoring requirements based on the specific business context and risk level of each AI system.

2. How does contextual AI governance differ from traditional AI governance?

Traditional AI governance applies uniform controls across models, while contextual governance tailors oversight intensity according to business impact, regulatory sensitivity, and operational risk.

3. Why is business context important in AI governance?

Business context determines how much risk a model carries. High-impact or customer-facing models require stricter oversight than low-risk internal automation tools.

4. What are the core components of contextual AI governance?

Key components include risk-based classification, organizational accountability, human validation checkpoints, lifecycle monitoring, and continuous governance improvement loops.

5. How does contextual governance reduce regulatory risk?

By aligning governance intensity with regulatory exposure, contextual frameworks ensure high-risk models receive audit-ready documentation and stronger validation controls.

6. Can contextual governance improve operational efficiency?

Yes. Low-risk models avoid unnecessary documentation and review overhead, while high-risk models receive proportionate oversight.

7. What role does human validation play in contextual governance?

Human validation ensures critical AI decisions are reviewed before deployment, especially in high-impact or regulated environments.

8. How do enterprises classify AI models in contextual governance?

Models are categorized based on business function, customer impact, financial exposure, and regulatory sensitivity.

9. Is an AI governance platform necessary for contextual governance?

While not mandatory, a centralized AI governance platform significantly improves visibility, documentation control, and risk-based workflow automation.

10. How often should an AI contextual governance framework be reviewed?

Governance frameworks should be reviewed periodically, typically every 6–12 months, or whenever significant business or regulatory changes occur.