Agentic AI Governance Frameworks: Controlling Autonomous AI Systems

For the past decade, enterprise AI governance has been built around a relatively predictable problem: how to validate, monitor, and audit predictive models that score, classify, or forecast. The model received an input, produced an output, and a human decided what to do next. Governance was an exercise in oversight at the boundaries: pre-deployment validation, periodic performance reviews, and post-hoc audits.

Agentic AI breaks that model entirely.

Agentic AI systems do not stop at predictions. They plan, decide, execute, call tools, invoke APIs, and adapt as they learn from the environment. They act directly in production systems: moving money, updating customer records, triggering downstream workflows, and even spawning sub-agents, often without a human in the immediate loop.

This shift turns AI governance from a periodic checkpoint into a real-time control problem. Static policies, quarterly model reviews, and PDF-based attestations cannot keep pace with systems that make thousands of consequential decisions per day. Traditional AI governance frameworks such as NIST AI RMF, SR 11-7, ISO/IEC 42001, and most internal model risk management programs were designed for a world of static models in controlled environments. That world is fading.

The organizations leading enterprise AI today are building agentic AI governance frameworks that look fundamentally different: continuous instead of periodic, behavior-focused instead of model-focused, automated instead of paper-based, and integrated end-to-end instead of bolted on at the end. This guide explains what those frameworks must contain, where existing frameworks fall short, and how to operationalize agentic governance at enterprise scale.

What Makes Agentic AI Governance Fundamentally Different

Agentic AI governance is not just AI governance with more steps. It is a different discipline because the underlying systems behave differently.

From Static Models to Autonomous Systems

Traditional AI produces predictions: a credit score, a churn probability, a fraud flag. Humans interpret those predictions and decide what to do.

Agentic AI takes actions. An agent that detects a suspicious transaction may pause the account, notify the customer, draft a Suspicious Activity Report (SAR), and update the case management system in one autonomous chain. The unit of governance is no longer the model output. It is the system behavior: what the agent did, why it did it, what consequences followed, and whether any of those consequences violated policy or risk appetite.

Governance Must Address Behavior, Not Just Models

Model-Centric Governance (Old)

Model-centric governance asks questions like: Is the model accurate? Is it fair? Is it stable? These remain important, but they are insufficient. A perfectly accurate model embedded in an agent that takes the wrong action, or the right action in the wrong context, is still a governance failure.

Behavior-Centric Governance (New)

Behavior-centric governance asks: What did the system decide? Was the decision within sanctioned boundaries? What downstream actions followed? Who is accountable? This is closer to how operational risk and conduct risk are managed in financial services, and it is the foundation of any credible agentic AI governance framework.

Continuous Decision-Making Requires Continuous Control

Agentic systems make decisions dynamically, in context, often using information that did not exist when the system was last reviewed. A governance framework that depends on quarterly attestations cannot meaningfully constrain a system making 50,000 decisions a day. Controls must operate at runtime, observing, logging, validating, and where necessary, intercepting decisions in real time.

Traditional vs. Agentic AI Governance

| Dimension | Traditional Governance | Agentic AI Governance |
| --- | --- | --- |
| Focus | Model performance | System behavior |
| Control | Pre-deployment | Continuous |
| Risk | Predictable | Dynamic |
| Monitoring | Periodic | Real-time |
| Accountability | Limited | End-to-end |

Insight: Agentic AI governance must evolve into a continuous control system, not just a compliance layer.

Why Existing AI Governance Frameworks Fall Short

Most enterprise AI governance programs today are built on top of frameworks like the NIST AI Risk Management Framework, the Federal Reserve’s SR 11-7 guidance, OSFI’s E-23, or ISO/IEC 42001. These frameworks codify good practice, but they assume stable systems and bounded outputs. Agentic AI breaks both assumptions.

Static Policies Cannot Govern Dynamic Behavior

Policies in traditional frameworks are largely predefined and enforced through approval gates. An agentic AI system, by contrast, adapts in real time by choosing tools, planning multi-step actions, and sometimes self-modifying through learning. A policy written last quarter may not anticipate the action the agent takes this afternoon.

Lack of Enforcement Mechanisms

Existing frameworks tell organizations what should be done, such as documenting the model, validating the model, and monitoring the model, but they do not specify how to enforce these requirements technically. Most enterprise governance still depends on humans completing checklists. That is unsustainable when AI systems are themselves the actors.

No Real-Time Visibility into Decisions

Periodic monitoring is built into most frameworks. Real-time monitoring of agent decisions, tool calls, and downstream consequences typically is not. Without live decision tracking, organizations cannot reconstruct what the system did, why, or whether it stayed inside its sanctioned boundary, which makes meaningful AI system accountability nearly impossible.

Framework Gaps in the Agentic AI Context

| Framework Capability | Current State | Gap in Agentic AI |
| --- | --- | --- |
| Policy Definition | Strong | Static |
| Risk Assessment | Moderate | Not continuous |
| Monitoring | Limited | Not real-time |
| Enforcement | Weak | Not automated |
| Accountability | Partial | Incomplete |

Insight: Frameworks must evolve from guidelines into executable governance systems.

Core Components of Agentic AI Governance Frameworks

A modern agentic AI governance framework needs four components working in concert: control, visibility, enforcement, and orchestration. Each addresses a specific failure mode of static governance.

Decision Boundaries and Control Layers

What Are Decision Boundaries

Decision boundaries define the space within which an agent is permitted to act autonomously, and where it must escalate, pause, or refuse. They are the operational expression of the organization’s risk appetite. A wealth-management agent might be allowed to rebalance within tolerance bands but must escalate any trade above a certain notional. A customer-service agent might be empowered to issue refunds up to a defined threshold and below a defined frequency.
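To make the refund example concrete, a decision boundary can be written as a small, machine-checkable object. The following is a minimal sketch under stated assumptions: `RefundBoundary`, `Verdict`, `evaluate_refund`, and every threshold value are hypothetical names and numbers chosen for illustration, not part of any real policy or product.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"        # agent may act autonomously
    ESCALATE = "escalate"  # route to a human
    REFUSE = "refuse"      # hard block


@dataclass(frozen=True)
class RefundBoundary:
    """Hypothetical boundary for a customer-service agent."""
    max_amount: float         # largest refund the agent may issue on its own
    max_refunds_per_day: int  # frequency cap per customer
    hard_limit: float         # above this amount the agent must refuse


def evaluate_refund(boundary: RefundBoundary, amount: float, refunds_today: int) -> Verdict:
    """Decide whether a proposed refund is inside the sanctioned boundary."""
    if amount <= 0 or amount > boundary.hard_limit:
        return Verdict.REFUSE
    if amount > boundary.max_amount or refunds_today >= boundary.max_refunds_per_day:
        return Verdict.ESCALATE
    return Verdict.ALLOW


# Assumed thresholds, for illustration only.
boundary = RefundBoundary(max_amount=100.0, max_refunds_per_day=2, hard_limit=1000.0)
print(evaluate_refund(boundary, 40.0, refunds_today=0))    # inside both limits
print(evaluate_refund(boundary, 250.0, refunds_today=0))   # above the amount threshold
print(evaluate_refund(boundary, 40.0, refunds_today=2))    # frequency cap reached
print(evaluate_refund(boundary, 5000.0, refunds_today=0))  # above the hard limit
```

Keeping the boundary as immutable data rather than logic buried inside the agent means it can be reviewed, versioned, and changed without touching the agent itself.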

Control Mechanisms

Decision boundary controls typically include rule-based constraints (the agent cannot take action X under condition Y), approval gates (certain actions require human sign-off), system limits (rate, scope, and tool-access limits), and policy circuit breakers (if anomalous behavior is detected, the agent is paused). Together, these form the AI governance architecture that turns abstract policy into runtime enforcement.
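Of the mechanisms listed above, the policy circuit breaker is the least familiar to most governance teams, so a small sketch may help. The class below is a hypothetical illustration: `PolicyCircuitBreaker`, its parameters, and the "N anomalies within a time window" trip rule are assumptions, and a real deployment would define its own anomaly signals and reset procedure.

```python
import time
from collections import deque
from typing import Optional


class PolicyCircuitBreaker:
    """Pauses an agent when anomalies cluster inside a time window."""

    def __init__(self, max_anomalies: int, window_seconds: float) -> None:
        self.max_anomalies = max_anomalies
        self.window_seconds = window_seconds
        self._anomaly_times: deque = deque()
        self.paused = False

    def record_anomaly(self, now: Optional[float] = None) -> None:
        """Register one anomaly signal and trip the breaker if the window is full."""
        now = time.monotonic() if now is None else now
        self._anomaly_times.append(now)
        # Discard anomalies that have aged out of the window.
        while self._anomaly_times and now - self._anomaly_times[0] > self.window_seconds:
            self._anomaly_times.popleft()
        if len(self._anomaly_times) >= self.max_anomalies:
            self.paused = True  # stays tripped until reset() is called

    def allow_action(self) -> bool:
        """The agent runtime checks this before every action."""
        return not self.paused

    def reset(self) -> None:
        """Manual reset, intended to be invoked by a human operator."""
        self._anomaly_times.clear()
        self.paused = False


breaker = PolicyCircuitBreaker(max_anomalies=3, window_seconds=60.0)
for t in (0.0, 10.0, 100.0):  # the first two anomalies age out before the third
    breaker.record_anomaly(now=t)
print(breaker.allow_action())
for t in (110.0, 120.0):      # three anomalies now fall within 60 seconds
    breaker.record_anomaly(now=t)
print(breaker.allow_action())
```

The breaker deliberately does not reset itself: once tripped, the agent stays paused until someone calls `reset()`, which keeps the decision to resume with a human.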

Human-in-the-Loop and Override Systems

When Human Intervention Is Required

Not every decision should be autonomous. Frameworks should specify the categories of decisions that always require human review: high-risk financial transactions, decisions affecting protected classes, novel situations the agent has not encountered before, and any action triggered by an anomaly signal or regulatory flag.
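The four review categories above can be encoded as an explicit routing check that runs before an agent acts. This is only a sketch: the `ProposedDecision` fields, the `HIGH_RISK_AMOUNT` threshold, and the reason strings are hypothetical, and how an organization detects a "novel situation" is assumed to be decided elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedDecision:
    """Hypothetical summary of a decision an agent wants to take."""
    amount: float = 0.0
    affects_protected_class: bool = False
    seen_similar_before: bool = True
    anomaly_flag: bool = False
    regulatory_flag: bool = False


HIGH_RISK_AMOUNT = 10_000.0  # assumed threshold, derived from risk appetite


def review_reasons(decision: ProposedDecision) -> list[str]:
    """Return every reason the decision needs a human; an empty list means none."""
    reasons = []
    if decision.amount >= HIGH_RISK_AMOUNT:
        reasons.append("high-risk financial transaction")
    if decision.affects_protected_class:
        reasons.append("affects a protected class")
    if not decision.seen_similar_before:
        reasons.append("novel situation")
    if decision.anomaly_flag or decision.regulatory_flag:
        reasons.append("anomaly or regulatory flag")
    return reasons


print(review_reasons(ProposedDecision(amount=250.0)))
print(review_reasons(ProposedDecision(amount=25_000.0, seen_similar_before=False)))
```

Returning the reasons rather than a bare boolean gives the human reviewer, and later the auditor, a record of why the decision was routed.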

Override Capabilities

Operators must retain the ability to pause individual agents, reverse in-flight actions where technically possible, escalate decisions to higher authority, and shut down agent populations entirely under defined conditions. These are not optional. They are the fail-safe layer that makes autonomous AI governance trustworthy.
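One way to picture the pause and shutdown capabilities is a small control plane that every agent consults before acting. The sketch below is hypothetical: `AgentControlPlane`, `AgentState`, and the method names are illustrative assumptions, and reversing in-flight actions is left out because it depends on the downstream systems involved.

```python
from enum import Enum


class AgentState(Enum):
    RUNNING = "running"
    PAUSED = "paused"
    STOPPED = "stopped"


class AgentControlPlane:
    """Hypothetical registry that operators use to pause or stop agents."""

    def __init__(self) -> None:
        self._states: dict[str, AgentState] = {}
        self.audit_log: list[tuple[str, str, str]] = []  # (operator, action, target)

    def register(self, agent_id: str) -> None:
        self._states[agent_id] = AgentState.RUNNING

    def pause(self, agent_id: str, operator: str) -> None:
        if self._states[agent_id] is AgentState.RUNNING:
            self._states[agent_id] = AgentState.PAUSED
        self.audit_log.append((operator, "pause", agent_id))

    def resume(self, agent_id: str, operator: str) -> None:
        # Only a paused agent may resume; a stopped agent stays stopped.
        if self._states[agent_id] is AgentState.PAUSED:
            self._states[agent_id] = AgentState.RUNNING
        self.audit_log.append((operator, "resume", agent_id))

    def shutdown_all(self, operator: str) -> None:
        """Stop the entire agent population."""
        for agent_id in self._states:
            self._states[agent_id] = AgentState.STOPPED
        self.audit_log.append((operator, "shutdown_all", "*"))

    def may_act(self, agent_id: str) -> bool:
        """Agents check this before every action; unknown agents are denied."""
        return self._states.get(agent_id) is AgentState.RUNNING


plane = AgentControlPlane()
plane.register("refund-agent")
plane.register("fraud-agent")
plane.pause("refund-agent", operator="ops-oncall")
print(plane.may_act("refund-agent"), plane.may_act("fraud-agent"))
plane.shutdown_all(operator="ops-oncall")
print(plane.may_act("fraud-agent"))
```

Denying unknown agents by default means an unregistered agent cannot act just because nobody has paused it.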

Continuous Monitoring and Feedback Loops

Monitoring Dimensions

Effective monitoring spans three dimensions simultaneously: performance (is the agent producing accurate, useful outputs?), behavior (is it acting within sanctioned boundaries?), and decision patterns (are there drifts, clusters, or emerging risks visible only at the population level?). Monitoring accuracy alone misses the most important governance signals.

Feedback Systems

Agentic systems learn. Governance must learn with them. Feedback loops feed monitoring observations back into policy updates, control tuning, and re-validation triggers, closing the loop between observed behavior and the governance lifecycle orchestration that surrounds it.
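A feedback loop of this kind can be reduced to a function that maps a monitoring observation to governance actions. The sketch below assumes two hypothetical signals (`drift_score` and `boundary_breach_rate`), assumed thresholds, and made-up action names; it illustrates the shape of the loop rather than recommending a set of metrics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonitoringObservation:
    agent_id: str
    drift_score: float           # assumed measure of divergence from baseline behavior
    boundary_breach_rate: float  # share of decisions blocked or escalated


def governance_actions(
    obs: MonitoringObservation,
    drift_threshold: float = 0.2,
    breach_threshold: float = 0.05,
) -> list[str]:
    """Translate one observation into the governance actions it should trigger."""
    actions = []
    if obs.drift_score > drift_threshold:
        actions.append("trigger_revalidation")
    if obs.boundary_breach_rate > breach_threshold:
        actions.append("tune_controls")
    if len(actions) == 2:
        # Both signals firing together is treated as serious enough for a human.
        actions.append("escalate_to_human")
    return actions


print(governance_actions(MonitoringObservation("refund-agent", 0.05, 0.01)))
print(governance_actions(MonitoringObservation("refund-agent", 0.35, 0.10)))
```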

Governance Workflow Automation

Workflow Components

Validation, approval, monitoring, change management, and audit reporting must be implemented as automated workflows, not manual processes. Each agentic system should move through a defined lifecycle (intake, risk tiering, validation, approval, deployment, monitoring, recertification, and retirement), with the relevant artifacts produced and stored automatically.
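The lifecycle described above behaves like a state machine in which every transition must be permitted and must leave an artifact behind. The following is a minimal sketch: the stage names follow the list in the text, but the `ALLOWED_TRANSITIONS` map (including the assumption that recertification returns a system to monitoring) and the `LifecycleRecord` class are hypothetical.

```python
from datetime import datetime, timezone

# Assumed transition map; a real program would define its own.
ALLOWED_TRANSITIONS = {
    "intake": {"risk_tiering"},
    "risk_tiering": {"validation"},
    "validation": {"approval"},
    "approval": {"deployment"},
    "deployment": {"monitoring"},
    "monitoring": {"recertification", "retirement"},
    "recertification": {"monitoring", "retirement"},
    "retirement": set(),
}


class LifecycleRecord:
    """Tracks one agentic system through its governance lifecycle."""

    def __init__(self, system_id: str) -> None:
        self.system_id = system_id
        self.stage = "intake"
        self.history: list[tuple[str, str, str, str]] = []  # (time, from, to, artifact)

    def advance(self, next_stage: str, artifact: str) -> None:
        """Move to next_stage, refusing skipped stages and missing artifacts."""
        if next_stage not in ALLOWED_TRANSITIONS[self.stage]:
            raise ValueError(f"{self.stage} -> {next_stage} is not permitted")
        if not artifact:
            raise ValueError("every transition must produce an artifact")
        stamp = datetime.now(timezone.utc).isoformat()
        self.history.append((stamp, self.stage, next_stage, artifact))
        self.stage = next_stage


record = LifecycleRecord("refund-agent")
record.advance("risk_tiering", artifact="intake-form.json")
record.advance("validation", artifact="risk-tier-assessment.json")
print(record.stage, len(record.history))
```

Because `advance` is the only way to change stage, the timestamped history is produced as a side effect of the work rather than compiled afterwards.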

Benefits

Workflow automation delivers three things manual processes cannot reliably provide: consistency (the same controls applied every time), scalability (governing hundreds or thousands of agents without proportional headcount), and auditability (every step recorded, timestamped, and traceable). This is what separates true AI governance platforms from spreadsheet-based programs.

Designing Enterprise-Ready Agentic AI Governance Frameworks

A framework that works for one agent in one business unit is not yet enterprise AI governance. Designing for the enterprise means designing for scale, integration, and standardization from day one.

Scalability Across AI Systems

Most large enterprises are headed toward portfolios containing hundreds or thousands of models and agents, spread across customer service, fraud, marketing, operations, finance, and risk. Governance must work across that entire portfolio without bottlenecking the model risk or compliance functions. A scalable AI governance strategy treats governance as a horizontal capability, not a per-project effort.

Integration with Risk and Compliance Systems

Agentic AI governance does not exist in isolation. It must integrate with enterprise risk management, model risk management, compliance reporting, internal audit, and regulator-facing reporting. When an agent’s risk profile changes, the relevant teams need to know automatically, not via email.

Standardization of Governance Processes

Standardization is what makes governance auditable and defensible: the same intake form, the same risk tiering, the same validation expectations, and the same documentation templates, all applied consistently. This is a core function of mature AI governance models and one of the highest-leverage investments an organization can make.

Enterprise Governance Framework Requirements

| Requirement | Description | Impact |
| --- | --- | --- |
| Scalability | Handle multiple AI systems | Enterprise readiness |
| Standardization | Uniform governance processes | Efficiency |
| Integration | Connect with risk/compliance systems | Alignment |
| Automation | Reduce manual effort | Speed and accuracy |
| Visibility | Centralized insights | Better decisions |

Insight: Enterprise frameworks must be operational, not theoretical.

Governance Challenges in Agentic AI Systems

Even well-designed frameworks face real challenges in agentic environments. Three deserve special attention.

Lack of Transparency in Autonomous Decisions

Agentic systems frequently combine multiple models, retrieval components, tool calls, and chained reasoning steps. Reconstructing exactly why an agent took a particular action, at the granularity a regulator would accept, is genuinely hard. Frameworks must require structured decision logging at every step, not narrative summaries after the fact.

Difficulty in Auditing Autonomous Systems

Audit traditionally relies on stable artifacts: a model file, a validation report, a sign-off email. Agentic systems produce a continuous stream of decisions, each potentially shaped by context that no longer exists. Audit trails must capture not just decisions but the inputs, tool calls, sub-agent invocations, and policy state that surrounded them.
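A structured audit record that captures the elements listed above might look like the following. This is a sketch under assumptions: the `DecisionRecord` and `ToolCall` field names and the sample values are hypothetical, and a production schema would be agreed with audit and compliance teams.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ToolCall:
    tool: str
    arguments: dict
    result_summary: str


@dataclass
class DecisionRecord:
    """One agent decision plus the context that surrounded it."""
    agent_id: str
    inputs: dict
    policy_version: str  # the policy state in force when the decision was made
    final_action: str
    tool_calls: list = field(default_factory=list)
    sub_agent_invocations: list = field(default_factory=list)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize with sorted keys so records are stable and diffable."""
        return json.dumps(asdict(self), sort_keys=True)


decision = DecisionRecord(
    agent_id="fraud-agent",
    inputs={"transaction_id": "tx-123", "amount": 4200.0},
    policy_version="2.3.0",
    final_action="pause_account",
    tool_calls=[ToolCall("account_api.pause", {"account": "acct-9"}, "ok")],
    sub_agent_invocations=["notification-agent"],
)
print(decision.to_json())
```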

Managing Cross-System Dependencies

In production, agents rarely operate alone. They call other agents, share data, and trigger workflows that involve traditional systems. A change in one agent can cascade through five others. Modern model oversight frameworks must reason about agent populations and their dependencies, not just individual systems in isolation.
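Reasoning about a population of agents starts with a dependency graph and a way to ask which systems a change can reach. The sketch below uses a breadth-first traversal; the `feeds` mapping and the agent names are invented for illustration.

```python
from collections import deque


def downstream_impact(feeds: dict[str, set[str]], changed: str) -> set[str]:
    """Return every agent or system reachable from `changed`.

    `feeds[a]` is the set of agents or systems that consume a's output
    or are triggered by it.
    """
    impacted: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for consumer in feeds.get(current, ()):
            # Skip the origin and anything already visited (handles cycles).
            if consumer != changed and consumer not in impacted:
                impacted.add(consumer)
                queue.append(consumer)
    return impacted


# Hypothetical agent population.
feeds = {
    "fraud_detector": {"case_manager", "notifier"},
    "case_manager": {"sar_drafter"},
    "sar_drafter": {"case_manager"},  # a cycle, to show it terminates
    "marketing_agent": set(),
}
print(sorted(downstream_impact(feeds, "fraud_detector")))
```

Running the same traversal before a change is approved gives a basic change-impact check: any agent in the returned set is a candidate for re-validation.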

From Frameworks to Execution: Bridging the Gap

Most governance frameworks fail not in design but in execution. The policy document is approved, the steering committee is constituted, the principles are communicated, and then nothing operationally changes. For agentic AI, that gap is not a nuisance. It is a material risk.

Need for an Execution Layer

A framework defines what governance should look like. An execution layer translates that into workflows, system controls, automated checks, and runtime enforcement. Without this layer, governance policy automation is a slide in a deck rather than a control in production.

Enforcing Governance Across the Lifecycle

Governance must follow the agent across its entire lifecycle, from initial design through development, validation, approval, deployment, monitoring, change management, and retirement. Each stage needs defined entry and exit criteria, mandatory artifacts, and automated handoffs. This is the operational meaning of governance lifecycle orchestration.

Ensuring Audit Readiness

Audit readiness is not a project that begins three weeks before a regulatory exam. It is a property of the governance system itself. Documentation, decision logs, validation evidence, and approval trails should be continuously generated and continuously available. When regulatory AI governance demands evidence, whether for the EU AI Act, for OSFI E-23, or for SR 11-7, that evidence should already exist.

Framework vs. Execution Gap

| Area | Framework Defines | Execution Requires |
| --- | --- | --- |
| Policies | Rules | Enforcement systems |
| Validation | Standards | Automated workflows |
| Monitoring | Guidelines | Real-time tracking |
| Compliance | Requirements | Audit-ready outputs |

Insight: Without execution, governance frameworks remain theoretical.

How ValidMind Enables Agentic AI Governance Frameworks

ValidMind was built to close exactly this gap between the framework on paper and the controls in production. For organizations operationalizing agentic AI, ValidMind provides four capabilities that turn governance principles into running systems.

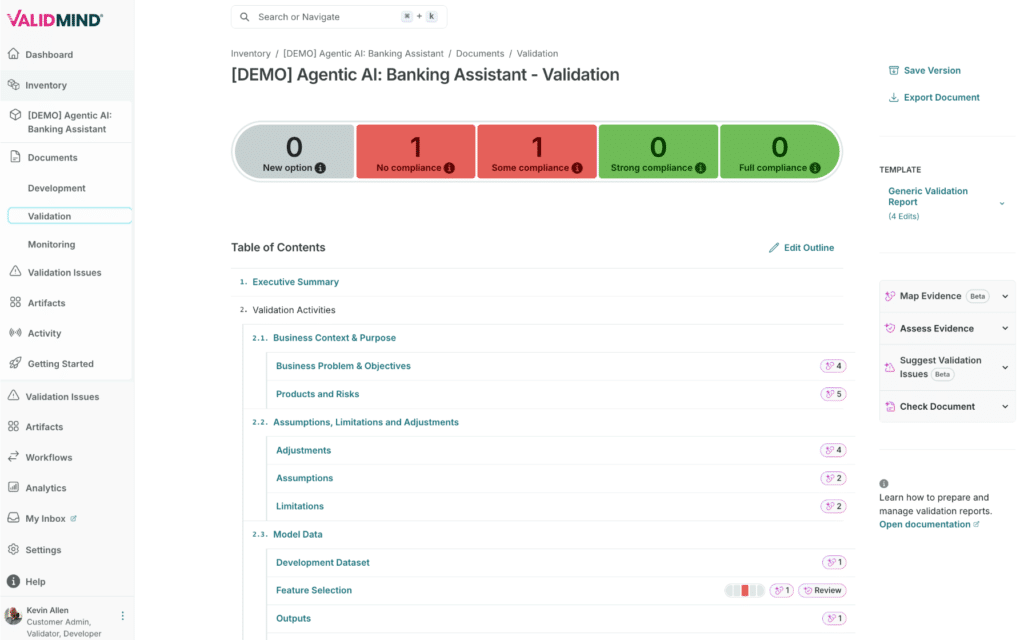

Structured Governance Workflows

ValidMind delivers standardized, configurable workflows for validation, approval, change management, and recertification. Each model and agent moves through a defined lifecycle with clear ownership, automated handoffs, and consistent evidence capture, replacing email threads, spreadsheets, and ad hoc review meetings with a single system of record.

Lifecycle Orchestration

End-to-end orchestration means governance follows the system from concept through retirement. Risk tiering at intake drives the depth of validation. Validation outputs drive approval routing. Approval drives deployment readiness. Monitoring outputs drive recertification triggers. Every stage is connected, every transition is recorded, and the entire chain is queryable.

Audit-Ready Documentation

ValidMind automates the production of validation reports, model risk documentation, and regulatory artifacts so they are generated as a byproduct of work, not as a separate effort. When auditors or regulators arrive, the evidence is already assembled, version-controlled, and traceable to the underlying tests, data, and approvals.

Centralized Model Oversight

A unified model and agent inventory gives risk, compliance, and the lines of business a single view of every AI system in production: its tier, its owner, its current state, its open issues, and its compliance posture. This centralized oversight is the foundation of enterprise AI governance that scales beyond pilots.

Further Reading from ValidMind

For practitioners building agentic AI programs in regulated industries, ValidMind publishes additional guidance on related governance challenges. Explore our deeper analyses of agentic AI in financial services governance, why agentic AI demands new governance approaches, and enterprise AI governance at scale. For regulator-specific implementation, our analysis of OSFI E-23 walks through obligations now applicable to AI and agentic systems. Teams operationalizing the platform can also explore our AI governance use case documentation, the ValidMind AI governance platform overview, and our complete ValidMind training overview.

Conclusion

Agentic AI introduces autonomy, complexity, and continuous risk into the enterprise. The governance approaches that worked for static predictive models, such as periodic validation, paper attestations, and point-in-time approvals, cannot keep pace with systems that decide and act thousands of times per day.

The future of AI governance is continuous, automated, and system-driven. It depends on decision boundaries that operate in real time, monitoring that watches behavior rather than just performance, and execution layers that enforce policy at runtime instead of in retrospect.

Agentic AI governance frameworks must evolve into executable, real-time control systems, and the organizations that build them now will be the ones that scale agentic AI safely while their competitors are still writing policies.

Agentic AI Governance Frameworks FAQs

How do agentic AI governance frameworks enforce decision boundaries in autonomous systems?

Effective frameworks enforce decision boundaries through layered runtime controls: rule-based constraints that hard-block prohibited actions, approval gates that route high-risk decisions to humans, scope and rate limits on tool access, and policy circuit breakers that pause agents when anomaly signals fire. The boundaries themselves are derived from the organization’s risk appetite and codified into machine-enforceable policies, not narrative documents.

What architectural components are required to govern agentic AI behavior in real time?

A real-time governance architecture requires at minimum: a policy engine that evaluates decisions against current rules, a decision logger that captures inputs, reasoning, and tool calls, a monitoring layer that tracks behavior and anomalies, an intervention layer that can pause or override agents, and an orchestration layer that connects governance to the broader risk and compliance stack.

How can governance frameworks ensure accountability in multi-agent AI systems?

Accountability in multi-agent systems requires per-agent identity, structured logging of every cross-agent invocation, and clear ownership assignment at the system level. Frameworks should specify which human or team owns each agent, who is accountable for emergent behavior across an agent population, and how cascading actions are traced back to their originating decision.

What mechanisms are used to monitor and control cascading actions in agentic AI workflows?

Cascading actions are controlled through dependency mapping (knowing which systems and agents an action will affect), action budgets (limits on the cumulative impact of a chain of decisions), staged execution (high-impact actions complete only after lower-impact precursors validate), and rollback capabilities where state can be restored. Monitoring sits across the chain, not just at individual steps.
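An action budget is the simplest of these mechanisms to show in code: each chain of decisions carries a cumulative limit, and any step that would exceed it is denied. The `ActionBudget` class, the notion of a per-step "cost", and the numbers below are hypothetical.

```python
class ActionBudget:
    """Caps the cumulative impact of one chain of agent actions."""

    def __init__(self, chain_id: str, limit: float) -> None:
        self.chain_id = chain_id
        self.limit = limit
        self.spent = 0.0

    def authorize(self, cost: float) -> bool:
        """Approve the step only if the chain stays within its budget."""
        if cost < 0:
            raise ValueError("cost must be non-negative")
        if self.spent + cost > self.limit:
            return False  # denied steps do not consume budget
        self.spent += cost
        return True


# Assumed: cost is the monetary impact of each step in the chain.
budget = ActionBudget(chain_id="chain-42", limit=1000.0)
print(budget.authorize(400.0))  # first step fits
print(budget.authorize(500.0))  # cumulative 900, still fits
print(budget.authorize(200.0))  # would reach 1100, denied
print(budget.authorize(100.0))  # reaches exactly 1000, allowed
```

Tracking the budget per chain rather than per agent is what lets it catch a series of individually small actions that add up to a large one.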

How do agentic AI governance frameworks integrate with enterprise risk management systems?

Integration happens at the data layer and the workflow layer. AI governance feeds risk events, control breaches, and validation outcomes into enterprise risk registers in near-real time. Conversely, enterprise risk taxonomies, risk appetite statements, and control libraries flow into AI governance to ensure consistent classification. Mature programs also integrate with internal audit and regulatory reporting pipelines.

What role do feedback loops play in maintaining governance control over adaptive AI systems?

Feedback loops convert monitoring observations into governance actions. When monitoring detects drift, anomalous behavior, or outcomes that fall outside expected ranges, feedback loops trigger re-validation, policy updates, control tuning, or escalation to human reviewers. Without active feedback loops, governance becomes stale within weeks of deployment.

How can organizations audit decision-making processes in agentic AI environments?

Auditing autonomous decisions requires structured logging at every reasoning and action step, capturing inputs, retrieved context, tool calls, sub-agent invocations, policy state, and final action in a tamper-evident store. Audits then reconstruct decisions deterministically from these logs. Narrative explanations alone are insufficient for regulatory audit; the underlying telemetry must support the explanation.
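One common way to make a log tamper-evident is a hash chain, in which each entry's hash covers the previous entry's hash. The sketch below illustrates that technique with Python's standard `hashlib` and `json` modules; the `TamperEvidentLog` class and the record fields are hypothetical, and a production store would also need durable storage and access control.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class TamperEvidentLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = self._digest(prev_hash, record)
        self.entries.append({"record": record, "prev_hash": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


log = TamperEvidentLog()
log.append({"agent": "fraud-agent", "action": "pause_account", "policy": "2.3.0"})
log.append({"agent": "fraud-agent", "action": "notify_customer", "policy": "2.3.0"})
print(log.verify())
log.entries[0]["record"]["action"] = "no_action"  # simulate after-the-fact editing
print(log.verify())
```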

What are the key differences between model-level governance and system-level governance in agentic AI?

Model-level governance focuses on the properties of individual models: accuracy, fairness, stability, drift. System-level governance focuses on the behavior of the agent as a whole: which decisions it makes, which actions it executes, how it interacts with other systems, and what consequences follow. Agentic AI requires both, but system-level governance is where most existing programs are weakest.

How do governance frameworks handle dynamic policy enforcement in continuously learning AI systems?

Frameworks treat policy as code, not paper. Policies are versioned, tested, and deployed through a controlled process. When an agent’s behavior or environment changes, policies can be updated and pushed to the runtime enforcement layer without taking the system offline. Continuously learning systems also trigger automatic re-validation cycles to confirm that policies still constrain the agent’s actual behavior.
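A rough sketch of what "versioned, tested, and deployed" can mean in practice: a candidate policy becomes active only if it passes its test cases, and earlier versions remain available for rollback. `RefundPolicy`, `PolicyStore`, and the version numbers are hypothetical and stand in for whatever policy engine an organization actually uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RefundPolicy:
    version: str
    max_refund: float

    def permits(self, amount: float) -> bool:
        return 0 < amount <= self.max_refund


class PolicyStore:
    """Holds the active policy and the history of earlier versions."""

    def __init__(self, initial: RefundPolicy) -> None:
        self.history = [initial]

    @property
    def active(self) -> RefundPolicy:
        return self.history[-1]

    def deploy(self, candidate: RefundPolicy, test_cases: list[tuple[float, bool]]) -> None:
        """Activate the candidate only if every (amount, expected) case passes."""
        for amount, expected in test_cases:
            if candidate.permits(amount) != expected:
                raise ValueError(
                    f"policy {candidate.version} failed test for amount {amount}"
                )
        self.history.append(candidate)

    def rollback(self) -> None:
        """Return to the previous version, if there is one."""
        if len(self.history) > 1:
            self.history.pop()


store = PolicyStore(RefundPolicy("1.0.0", max_refund=100.0))
store.deploy(
    RefundPolicy("1.1.0", max_refund=50.0),
    test_cases=[(40.0, True), (75.0, False)],
)
print(store.active.version, store.active.permits(75.0))
```

Because the agent reads `store.active` at decision time, a new version takes effect on the next decision without restarting the agent.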

What controls are necessary to manage cross-system dependencies in agentic AI architectures?

Cross-system dependencies require dependency inventories that map every upstream and downstream connection, change-impact analysis when any agent in the population is modified, isolation patterns that limit blast radius, and population-level monitoring that watches for emergent behavior visible only across multiple agents. Single-system controls are not sufficient when agents call other agents.