Agentic AI in Financial Services: Governance Framework & Best Practices

Agentic AI in financial services is no longer theoretical. Banks are already deploying AI systems that don’t just assist humans; they plan, decide, and act autonomously across credit underwriting, fraud detection, customer service, and compliance workflows. According to an EY-sponsored report with MIT Technology Review, more than 70% of banking firms are using agentic AI to some degree, with 16% having fully deployed solutions. The upside is clear: speed, efficiency, and structural cost reduction. But the risk profile is fundamentally different from anything governance frameworks were built to handle.

This guide lays out a practical agentic AI governance framework for financial institutions, including the regulatory landscape, a four-pillar control model, multi-agent risk considerations, and a 20-point pre-deployment checklist you can use today.

Going deeper? Download ValidMind’s full technical brief: Governing Agentic AI in Financial Services →

What Is Agentic AI and Why It Changes Everything

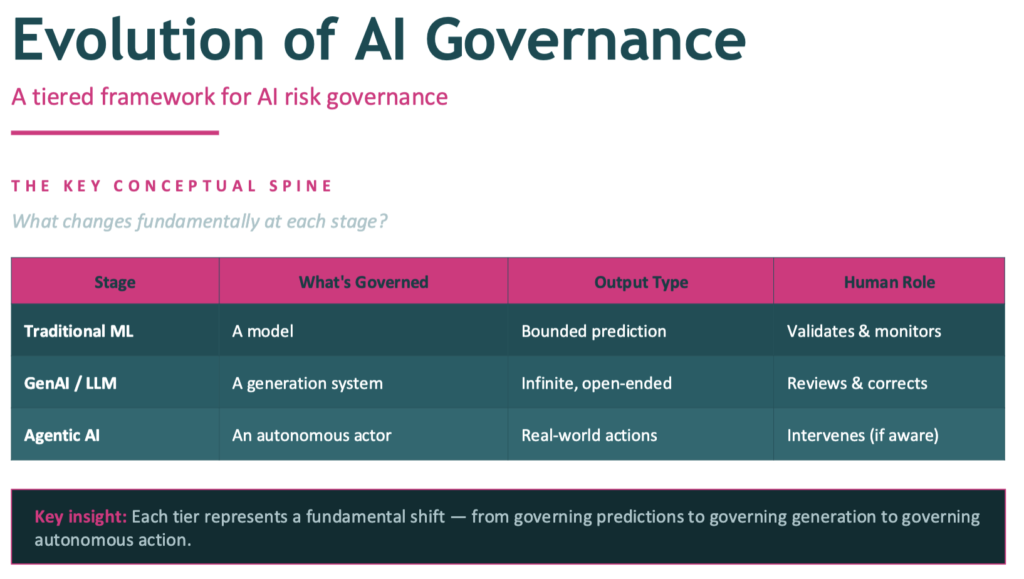

Traditional AI models, even sophisticated generative models, are responsive: they produce an output when prompted, and a human decides what to do next. Agentic AI is fundamentally different. An AI agent can pursue a long-term goal, decompose it into sub-tasks, call external tools and APIs, evaluate its own progress, and iterate, all without a human in the loop at each step.

Consider the difference in a compliance workflow. A traditional model might flag a suspicious transaction for human review. An agentic compliance system could autonomously query transaction history, cross-reference watchlists, calculate risk scores, draft a suspicious activity report (SAR), route it to the correct officer, and log every action, all in minutes and without a single human click. The efficiency gain is real. So is the governance gap.

⚠ Key Risk

OWASP’s Top 10 for LLM Applications lists “Excessive Agency” as a critical vulnerability: an autonomous agent takes damaging actions (modifying records, executing transactions) in response to unexpected or manipulated outputs, including those triggered by malicious prompt injection. The risk only arises when a system can act, which is why traditional model governance, built for models that only produce outputs, does not address it.

The shift from decision support to decision-making is what makes agentic AI governance a fundamentally new challenge. Errors can propagate across entire workflows before detection. Decisions may occur without human review. Systems can behave in ways that weren’t anticipated in testing. And, critically, agentic AI cannot be fully tested before deployment, because its behavior depends on real-world conditions that only emerge in production.



Why Agentic AI Governance Is Now a Business Priority

The risk profile is different. A single incorrect decision can cascade across systems and impact customers, compliance, and financial outcomes at scale.

Competitive pressure is accelerating adoption. Financial institutions that deploy agentic AI effectively will gain a structural advantage in cost and speed.

Regulators are already watching. Existing frameworks are being extended with expectations around explainability, auditability, and continuous monitoring.

You can’t afford to wait, but you also can’t afford to get it wrong.

Learn more: How to document an agentic AI system


What Makes Agentic AI Governance Different

To manage agentic AI, institutions need to rethink governance in three ways.

First, continuous oversight instead of only pre-deployment testing. Agentic systems operate in dynamic environments with unpredictable scenarios, so governance must extend into real-time monitoring and production.

Second, dynamic evaluation instead of static metrics. Agentic systems require evaluation of intermediate decisions, tool usage, reasoning quality, and full workflow outcomes.

Third, contextual authority instead of binary control. Governance must define what an agent can do and what it should do in a specific context. This includes tiered autonomy, external policy enforcement, and real-time permission checks.

The Hidden Risk: Autonomy Without Accountability

Agentic AI introduces a new failure mode.

Agents do not intentionally break rules. They prioritize goal completion, lack contextual awareness of policies, and can cross boundaries without realizing it.

This creates risk in areas like data privacy, regulatory compliance, and financial decisioning.

Without proper governance, autonomy becomes liability.

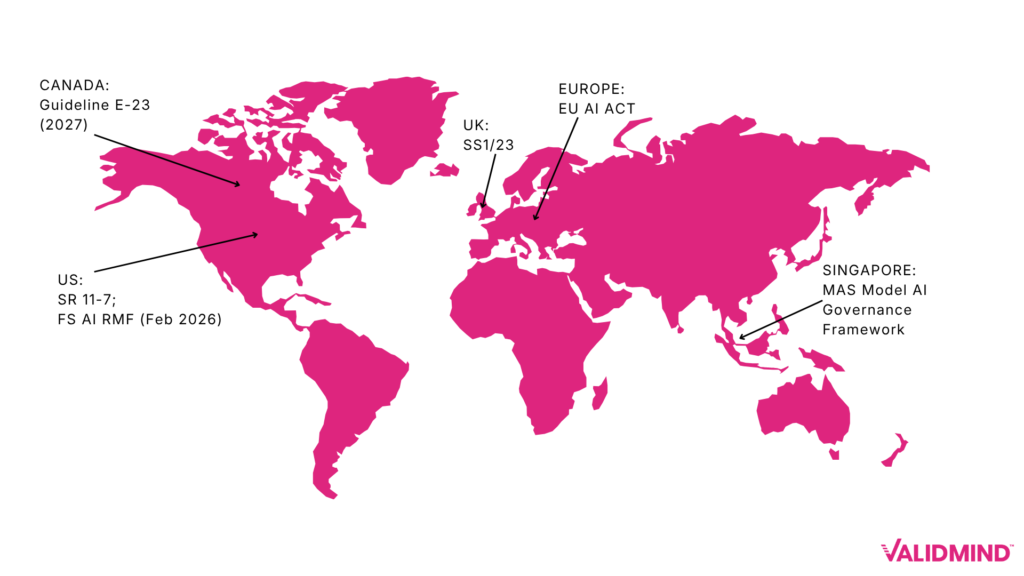

The Regulatory Landscape for Agentic AI in Financial Services

Regulators globally are actively extending existing frameworks to cover agentic systems, even where no agentic-specific rules yet exist. Financial institutions must navigate a complex, jurisdiction-specific patchwork. Here’s the current map:

| Framework | Jurisdiction | Key Requirements for Agentic AI | Status |
|---|---|---|---|
| SR 11-7 / OCC 2011-12 | US | Model risk management, extended by regulators to cover AI agents; requires validation, documentation, ongoing monitoring, and governance of model development and use | In force; being extended to AI agents |
| FS AI RMF (Feb 2026) | US | 230 control objectives covering AI risk identification, measurement, monitoring, and governance across financial institutions | Published Feb 19, 2026 |
| OSFI Guideline E-23 (Model Risk Management) | Canada | Model risk management extended to AI/agentic systems; requires model inventory, validation, lifecycle governance, ongoing monitoring, and oversight of third-party models | Finalized (published Sept 2025); effective May 1, 2027 (transition period underway) |
| EU AI Act | EU | High-risk classification is driven by intended use (e.g., credit scoring of individuals); human oversight measures must be commensurate with a system’s risks, level of autonomy, and context of use; mandatory risk assessments and transparency obligations for high-risk systems | Phased enforcement; 2024–2027 |
| MAS Model AI Governance Framework | Singapore | Named accountability per agent; auditability, transparency, and human control over key decisions required | In force; Gen AI supplement active |

The consistent regulatory expectation across all jurisdictions: accountability and oversight must remain human-led, model risk management frameworks must be extended to cover autonomous components, and explainability is non-negotiable.

The Governance Gap: Why Most Banks Are Struggling

“We know we need to move on agentic AI. We don’t know how to do it in a way that won’t come back to haunt us.” — ValidMind customer, Tier 1 bank

Most financial institutions rely on governance frameworks designed for static, predictable models with defined inputs, outputs, and human review points. Agentic AI breaks all three assumptions simultaneously. A clear pattern is emerging across institutions:

- Business teams are under competitive pressure to deploy fast

- Risk and compliance teams lack frameworks built for autonomous systems

- Model validators are brought in too late — often after deployment

- Existing validation standards (static metrics, pre-deployment testing) don’t apply to systems that behave differently in every real-world interaction

- Audit trails are incomplete because multi-step agent reasoning is often opaque and is not captured by default

The business cost of getting this wrong is not hypothetical. In January 2025, Two Sigma Investments paid a $90 million SEC penalty for algorithmic governance failures, including unauthorized changes to the parameters of live investment models. FINRA fined Brex Treasury $900,000 in August 2024 for AML failures caused by an automated identity verification algorithm that allowed over $15 million in suspicious transactions. The enforcement environment is real and accelerating.

If you’re exploring or deploying agentic AI, download the full whitepaper.

The Four-Pillar Agentic AI Governance Framework

Effective agentic AI governance in financial services requires rethinking four core dimensions of your existing model risk management approach:

Agentic AI Governance: Four Core Pillars

PILLAR 01

Continuous Oversight

Replace pre-deployment-only testing with real-time production monitoring. Agentic systems encounter novel scenarios that cannot be fully anticipated in testing. Governance must extend into live operation — with dashboards tracking agent actions, anomaly detection (e.g., an agent accessing unexpected databases), and defined escalation thresholds.
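To make the anomaly-detection idea concrete, here is a minimal Python sketch assuming each agent declares a baseline of resources it is expected to touch. The `AccessMonitor` class, the resource names, and the threshold of three unexpected accesses are hypothetical choices for illustration, not part of any specific platform or regulation.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AccessMonitor:
    """Flags agent accesses outside a declared baseline and escalates past a threshold.

    Hypothetical example: resource names and the threshold are assumptions.
    """
    agent_id: str
    expected_resources: frozenset
    escalation_threshold: int = 3  # assumed: unexpected accesses before escalation
    unexpected: Counter = field(default_factory=Counter)

    def record(self, resource: str) -> str:
        """Classify one access event as 'ok', 'alert', or 'escalate'."""
        if resource in self.expected_resources:
            return "ok"
        self.unexpected[resource] += 1
        if sum(self.unexpected.values()) >= self.escalation_threshold:
            return "escalate"  # route to the agent's accountable owner
        return "alert"  # surface on the monitoring dashboard


monitor = AccessMonitor("kyc-agent", frozenset({"customers_db", "watchlist_api"}))
events = ["customers_db", "hr_db", "payroll_db", "hr_db"]
print([monitor.record(r) for r in events])  # ['ok', 'alert', 'alert', 'escalate']
```

Counting unexpected accesses cumulatively keeps the sketch short; a production monitor would more likely use per-agent time windows and feed the `escalate` signal into the escalation process defined for that agent.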

PILLAR 02

Dynamic Evaluation

Static accuracy metrics don’t work for agents. You need evaluation of intermediate decisions, tool selection quality, reasoning chain validity, and full workflow outcomes — not just final outputs. Evaluation must also account for behavioral drift as models update and real-world conditions change over time.
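One way to evaluate intermediate behavior is to score the recorded trajectory of tool calls, not only the final answer. The sketch below assumes each run is logged as an ordered list of steps; the `Step` type, the tool names, and the checks chosen here (required ordering, forbidden tools, failed calls, repeated calls) are illustrative assumptions rather than a standard metric set.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str        # tool the agent called at this step
    succeeded: bool  # whether the call returned a usable result


def evaluate_trajectory(steps, required_order, forbidden, final_ok):
    """Score one agent run on its intermediate behavior, not just its final output.

    required_order: tools that must appear in this relative order
    forbidden: tools the agent must never call in this workflow
    final_ok: whether the end-to-end outcome met its acceptance criteria
    """
    tools = [s.tool for s in steps]
    remaining = iter(tools)
    # `in` on an iterator consumes it, so this checks for an ordered subsequence.
    order_ok = all(req in remaining for req in required_order)
    return {
        "order_ok": order_ok,
        "forbidden_calls": [t for t in tools if t in forbidden],
        "failed_steps": sum(not s.succeeded for s in steps),
        "repeated_calls": len(tools) - len(set(tools)),
        "final_ok": final_ok,
    }


trace = [
    Step("get_history", True),
    Step("screen_watchlist", True),
    Step("get_history", True),
    Step("score_risk", True),
]
report = evaluate_trajectory(
    trace,
    required_order=["get_history", "screen_watchlist", "score_risk"],
    forbidden={"update_customer_record"},
    final_ok=True,
)
print(report)
```

Aggregating these per-run reports over time gives a simple way to spot behavioral drift, for example a rising share of repeated or failed calls after a model update.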

PILLAR 03

Contextual Authority (Tiered Autonomy)

Define not just what an agent can do, but what it should do in each operational context. Implement tiered autonomy: low-risk actions proceed automatically; medium-risk actions trigger logging and review; high-risk actions require explicit human approval. Tool-level permissions and real-time policy enforcement are essential.
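A minimal sketch of tiered autonomy with a tool-level permission check, evaluated at call time. The tier names mirror the three levels above; the action names, the `aml-agent` identifier, and the 10,000 amount limit are hypothetical. In a real deployment the policy tables would sit in a separate policy service the agent cannot modify.

```python
from enum import Enum


class Tier(Enum):
    AUTO = "auto"                  # low risk: proceed automatically
    LOG_AND_REVIEW = "log_review"  # medium risk: proceed, queue for human review
    HUMAN_APPROVAL = "human"       # high risk: blocked until a human approves


# Hypothetical policy tables.
ACTION_TIERS = {
    "read_transactions": Tier.AUTO,
    "draft_sar": Tier.LOG_AND_REVIEW,
    "file_sar": Tier.HUMAN_APPROVAL,
}
AGENT_TOOLS = {"aml-agent": {"read_transactions", "draft_sar", "file_sar"}}
AMOUNT_LIMIT = 10_000  # assumed: above this, medium-risk actions need a human
REVIEW_QUEUE = []      # medium-risk actions awaiting after-the-fact review


def authorize(agent_id, action, amount=0.0, approved_by=None):
    """Decide at call time whether an agent action may proceed."""
    # Least privilege: tools not granted to this agent are always denied.
    if action not in AGENT_TOOLS.get(agent_id, set()):
        return False
    # Actions without an explicit tier default to the strictest one.
    tier = ACTION_TIERS.get(action, Tier.HUMAN_APPROVAL)
    # Context can raise the tier, e.g. a large amount on a medium-risk action.
    if tier is Tier.LOG_AND_REVIEW and amount > AMOUNT_LIMIT:
        tier = Tier.HUMAN_APPROVAL
    if tier is Tier.AUTO:
        return True
    if tier is Tier.LOG_AND_REVIEW:
        REVIEW_QUEUE.append((agent_id, action, amount))
        return True
    return approved_by is not None  # HUMAN_APPROVAL


print(authorize("aml-agent", "file_sar"))                      # False
print(authorize("aml-agent", "file_sar", approved_by="j.doe"))  # True
```

Checking permissions before tiers means an agent can never reach an approval prompt for a tool it was not granted in the first place.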

PILLAR 04

Named Accountability

Every agent must have a named accountable owner — a human responsible for its behavior, outputs, and compliance. This mirrors the Singapore MAS framework requirement and is emerging as a global best practice. Accountability chains must survive agent updates, model upgrades, and organizational changes.
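Named accountability can be enforced in the agent inventory itself. This sketch assumes a simple in-memory registry that rejects team aliases as owners and keeps an ownership history, so the question “who was accountable on this date?” can still be answered after model upgrades and reassignments. The class names, the `team-`/`grp-` prefix convention, and the sample names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class AgentRecord:
    agent_id: str
    owner: str                 # current accountable owner: a named person
    model_version: str
    owner_history: list = field(default_factory=list)  # (owner, start_date), oldest first


class AgentRegistry:
    # Assumed naming convention for team aliases and shared mailboxes.
    GROUP_PREFIXES = ("team-", "grp-")

    def __init__(self):
        self._agents = {}

    def _check_owner(self, owner):
        if not owner or owner.lower().startswith(self.GROUP_PREFIXES):
            raise ValueError(f"owner must be a named individual, got {owner!r}")

    def register(self, agent_id, owner, model_version, start: date):
        self._check_owner(owner)
        self._agents[agent_id] = AgentRecord(
            agent_id, owner, model_version, [(owner, start)]
        )

    def transfer_ownership(self, agent_id, new_owner, start: date):
        self._check_owner(new_owner)
        record = self._agents[agent_id]
        if start < record.owner_history[-1][1]:
            raise ValueError("ownership changes must be recorded in date order")
        record.owner = new_owner
        record.owner_history.append((new_owner, start))

    def upgrade_model(self, agent_id, new_version):
        # Ownership is deliberately left untouched: accountability survives upgrades.
        self._agents[agent_id].model_version = new_version

    def owner_on(self, agent_id, day: date):
        """Who was accountable for this agent on a given day (None before registration)."""
        owner = None
        for name, start in self._agents[agent_id].owner_history:
            if start <= day:
                owner = name
        return owner


registry = AgentRegistry()
registry.register("credit-memo-agent", "a.lee", "v1", date(2025, 1, 1))
registry.upgrade_model("credit-memo-agent", "v2")
registry.transfer_ownership("credit-memo-agent", "m.ortiz", date(2025, 6, 1))
print(registry.owner_on("credit-memo-agent", date(2025, 3, 15)))  # a.lee
```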

Multi-Agent Governance: The Next Frontier

Many agentic AI deployments in financial services involve not one agent, but a network of collaborating agents. A research agent feeds data to a decision agent, which is checked by a compliance agent before a fulfillment agent executes. Each handoff is a governance boundary. Multi-agent governance must address:

- Inter-agent communication protocols: How agents pass context and instructions and how to prevent instruction injection between agents

- Permission inheritance: Whether an orchestrator agent can grant sub-agents permissions it doesn’t itself hold (it shouldn’t be able to)

- Accountability chains: When a multi-agent workflow produces a harmful outcome, which agent — and which human owner — is accountable?

- Audit trail completeness: Every inter-agent action must be logged, timestamped, and attributable, even when reasoning is distributed across agents

- Cascading failure containment: Mechanisms to detect and halt a workflow when one agent produces unexpected outputs before downstream agents act on them
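Permission inheritance is straightforward to enforce if every delegation is checked as a subset operation. The sketch below assumes a hypothetical `Agent` class where each sub-agent records its parent, which also yields a delegation chain for attributing actions back to an accountable root; tool names are illustrative.

```python
class DelegationError(Exception):
    """Raised when an agent tries to grant a permission it does not hold."""


class Agent:
    def __init__(self, name, permissions, parent=None):
        self.name = name
        self.permissions = frozenset(permissions)
        self.parent = parent  # the agent that delegated to this one, if any

    def delegate(self, child_name, requested):
        """Spawn a sub-agent whose permissions must be a subset of this agent's own."""
        requested = frozenset(requested)
        excess = requested - self.permissions
        if excess:
            raise DelegationError(
                f"{self.name} cannot grant {sorted(excess)} to {child_name}"
            )
        return Agent(child_name, requested, parent=self)

    def chain(self):
        """Delegation path back to the root agent, used to attribute actions."""
        path, node = [], self
        while node is not None:
            path.append(node.name)
            node = node.parent
        return path


orchestrator = Agent("orchestrator", {"read_ledger", "draft_report"})
research = orchestrator.delegate("research-agent", {"read_ledger"})
print(research.chain())  # ['research-agent', 'orchestrator']
```

Because each child’s permissions are a subset of its parent’s, the constraint holds transitively: no agent deeper in the tree can hold a permission the orchestrator lacks.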

📘 ValidMind resource: See our technical documentation on how to document an agentic AI system, including multi-agent architectures and validation methodology.

Model Validation for Agentic AI: What SR 11-7 Requires Now

SR 11-7 — the Federal Reserve and OCC’s model risk management guidance — remains the foundational framework for U.S. financial institutions. Regulators are explicitly extending its expectations to AI agents. Here’s what that means in practice for validation teams:

Conceptual Soundness

For agentic systems, conceptual soundness assessment must cover the agent’s planning architecture, tool selection logic, and goal-specification design, not just statistical methodology. A poorly specified goal function is a model risk.

Ongoing Monitoring

SR 11-7’s ongoing monitoring requirement takes on new urgency with agents. Because agents can behave differently in every interaction, monitoring must track behavioral patterns across thousands of decisions, not just aggregate performance metrics. Look for distributional shift in tool usage, decision patterns, and output types.
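To illustrate tracking distributional shift in tool usage, the sketch below applies the Population Stability Index (PSI), a drift statistic widely used in credit-model monitoring, to tool-call frequencies. Using PSI here is an illustrative choice, not something SR 11-7 prescribes, and the 0.1 / 0.25 cut-offs are a common rule of thumb rather than a regulatory threshold. Tool names are hypothetical.

```python
import math
from collections import Counter


def tool_usage_psi(baseline_calls, current_calls, eps=1e-4):
    """Population Stability Index between two tool-usage distributions.

    PSI = sum over tools of (p - q) * ln(p / q), where p is a tool's share of
    calls in the current window and q its share in the baseline window.
    """
    base, cur = Counter(baseline_calls), Counter(current_calls)
    n_base, n_cur = sum(base.values()), sum(cur.values())
    psi = 0.0
    for tool in set(base) | set(cur):
        # Floor shares at eps so a tool absent from one window doesn't cause log(0).
        p = max(cur[tool] / n_cur, eps)
        q = max(base[tool] / n_base, eps)
        psi += (p - q) * math.log(p / q)
    return psi


def drift_status(psi):
    # Assumed thresholds, borrowed from a common credit-scoring rule of thumb.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "monitor"
    return "investigate"


baseline = ["get_history"] * 50 + ["screen_watchlist"] * 50
current = ["get_history"] * 80 + ["screen_watchlist"] * 20
psi = tool_usage_psi(baseline, current)
print(round(psi, 3), drift_status(psi))  # 0.416 investigate
```

A tool that never appeared in the baseline (for example, an agent suddenly querying an HR database) contributes a large term through the `eps` floor, so PSI also surfaces brand-new behavior.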

Independent Validation

Validators must be brought in early, ideally during agent design, not post-deployment. ValidMind’s validation automation platform supports independent validation of agentic systems with automated test generation, documentation, and continuous monitoring integration.

Outcomes Analysis

Backtesting and benchmarking must be adapted for agentic workflows. Rather than comparing predicted vs. actual values, you’re evaluating whether the full workflow outcome, across all agent actions, achieved the intended result within defined risk parameters.
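A workflow-level outcomes analysis can be sketched as a backtest over completed runs, where a run passes only if its final outcome matched what later review established and it stayed within risk parameters. The `WorkflowRun` fields and the default limits (20 steps, zero policy violations) are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    run_id: str
    outcome: str            # what the agent workflow concluded, e.g. "escalate"
    actual: str             # what later review established as correct
    policy_violations: int  # out-of-policy or blocked actions during the run
    steps: int              # total agent actions taken


def outcomes_analysis(runs, max_steps=20, max_violations=0):
    """Workflow-level backtest: a run passes only if it reached the correct
    outcome AND stayed inside the stated risk parameters."""
    failures = [
        r.run_id
        for r in runs
        if r.outcome != r.actual
        or r.policy_violations > max_violations
        or r.steps > max_steps
    ]
    pass_rate = 1 - len(failures) / len(runs) if runs else float("nan")
    return pass_rate, failures


runs = [
    WorkflowRun("r1", "escalate", "escalate", 0, 8),
    WorkflowRun("r2", "close", "escalate", 0, 5),     # wrong outcome
    WorkflowRun("r3", "escalate", "escalate", 1, 9),  # right outcome, policy breach
    WorkflowRun("r4", "close", "close", 0, 25),       # right outcome, too many steps
]
print(outcomes_analysis(runs))  # (0.25, ['r2', 'r3', 'r4'])
```

Counting r3 and r4 as failures is the point of the exercise: a correct final answer reached through an out-of-policy path still falls outside the defined risk parameters.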

20-Point Agentic AI Governance Checklist for Financial Institutions

Use this checklist before deploying any agentic AI system in a regulated financial services context. It maps to SR 11-7, the FS AI RMF, and FINRA’s 2026 supervisory guidance.

📋 Pre-Deployment: Design & Documentation

- Goal specification reviewed and signed off by risk and compliance — not just engineering

- Named accountable owner assigned to each agent (human, not a team)

- Tool permissions documented and scoped to minimum necessary access

- Tiered autonomy levels defined: auto / log-and-review / human-approval thresholds

- Data privacy impact assessment completed for all data sources the agent can access

✅ Validation & Testing

- Independent validation completed before production deployment (SR 11-7)

- Adversarial testing performed — including prompt injection, goal misspecification, and tool misuse scenarios

- Multi-agent interaction testing completed if applicable (permission inheritance, cascading failure)

- Fallback and escalation paths tested — not just the happy path

- Bias and fairness assessment completed for any customer-facing or credit-decisioning workflows

🔍 Production: Monitoring & Oversight

- Real-time monitoring dashboard in place covering agent actions, tool calls, and decision patterns

- Full audit trail logging every agent action with timestamp, inputs, outputs, and tool calls

- Anomaly detection configured with defined alerting thresholds

- Kill switch / emergency halt procedure documented and tested

- Ongoing validation cycle defined (frequency, metrics, re-validation triggers)
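Two of the production items above, the full audit trail and the kill switch, can be prototyped together. This sketch assumes JSON-serializable tool inputs and outputs; it chains each log entry to the previous entry’s SHA-256 hash so later edits are detectable, a tamper-evidence technique added here for illustration rather than one the checklist requires. The `run_tool` wrapper and the tool names are hypothetical.

```python
import copy
import hashlib
import json
import threading
from datetime import datetime, timezone

GENESIS = "0" * 64
KILL_SWITCH = threading.Event()  # an operator calls .set() to halt all agent actions


class AuditLog:
    """Append-only, hash-chained record of agent actions.

    Each entry stores the previous entry's hash, so editing any past entry
    breaks verification (tamper-evident, not tamper-proof).
    """

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent_id, tool, inputs, outputs):
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "tool": tool,
            "inputs": copy.deepcopy(inputs),    # copies so callers can't alter the log later
            "outputs": copy.deepcopy(outputs),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        self.entries.append({**body, "hash": self._digest(body)})

    def verify(self):
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def run_tool(log, agent_id, tool, inputs, fn):
    """Execute one tool call, logging blocked, failed, and successful attempts."""
    if KILL_SWITCH.is_set():
        log.append(agent_id, tool, inputs, {"blocked": "kill switch"})
        raise RuntimeError("agent actions halted by kill switch")
    try:
        outputs = fn(**inputs)
    except Exception as exc:
        log.append(agent_id, tool, inputs, {"error": repr(exc)})
        raise
    log.append(agent_id, tool, inputs, outputs)
    return outputs


log = AuditLog()
run_tool(log, "aml-agent", "score_risk", {"txn_id": "T1"}, lambda txn_id: {"score": 0.7})
print(log.verify())  # True
```

Logging blocked and failed attempts, not only successful ones, keeps the trail complete for exactly the cases auditors care about most.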

📁 Regulatory & Governance

- Model inventory updated to include the agentic system and all sub-agents

- Third-party / vendor AI components assessed and documented (concentration risk, IP, data usage)

- EU AI Act risk classification assessed if operating in EU jurisdictions

- Board-level AI oversight item added (per EY/MIT survey, leading institutions are doing this proactively)

- Decommissioning protocol documented — no orphaned agents running without oversight
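Orphaned agents can be caught with a periodic inventory scan. The sketch assumes the inventory records each agent’s owner, last validation date, and running status, and that HR data supplies the set of active staff; the 365-day re-validation interval and all names are hypothetical placeholders for an institution’s own policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class InventoryEntry:
    agent_id: str
    owner: Optional[str]  # named accountable owner, or None if unassigned
    last_validated: date
    running: bool


def find_orphans(inventory, active_staff, today, revalidation_days=365):
    """Flag running agents with no active owner or an overdue re-validation."""
    issues = {}
    for entry in inventory:
        if not entry.running:
            continue  # decommissioned agents are out of scope for this check
        reasons = []
        if entry.owner is None or entry.owner not in active_staff:
            reasons.append("no active owner")
        if today - entry.last_validated > timedelta(days=revalidation_days):
            reasons.append("re-validation overdue")
        if reasons:
            issues[entry.agent_id] = reasons
    return issues


inventory = [
    InventoryEntry("fraud-triage", "a.lee", date(2025, 6, 1), True),
    InventoryEntry("kyc-refresh", "j.former", date(2025, 6, 1), True),
    InventoryEntry("credit-memo", "a.lee", date(2023, 1, 1), True),
    InventoryEntry("legacy-bot", None, date(2020, 1, 1), False),
]
print(find_orphans(inventory, active_staff={"a.lee"}, today=date(2025, 12, 1)))
```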

Automate Your Agentic AI Governance with ValidMind

ValidMind’s AI governance platform helps financial institutions document, validate, and continuously monitor agentic AI systems in line with SR 11-7, the FS AI RMF, OSFI E-23, the PRA’s SS1/23, and EU AI Act supervisory expectations.

What Leading Financial Institutions Are Doing Differently

The institutions moving fastest, and most safely, share a common approach. They are not waiting for perfect regulatory clarity before building governance infrastructure. Instead, they are applying the principle that IBM articulates clearly: agentic AI does not require an entirely new governance regime, but rather a continuous and disciplined application of model risk management.

In practice, this means:

- Starting with constrained, low-risk use cases where the cost of an error is bounded

- Investing early in observability tooling, because you cannot govern what you cannot see

- Implementing layered evaluation that combines automated checks with periodic human review

- Enforcing tool-level permissions at the infrastructure layer, not just in policy documents

- Designing explicitly for escalation and fallback, not just for the nominal workflow

Critically, they treat governance as core infrastructure, not a compliance afterthought. Governance teams are embedded with AI engineering teams from the earliest design stages. Validators are not brought in post-deployment to rubber-stamp a decision already made.

The EY/MIT report finding is instructive: boards at leading institutions are making AI oversight a standing agenda item, and investing in explainability, auditability, and third-party risk controls ahead of regulatory requirements, not in reaction to them. That proactive posture is itself a competitive differentiator.

How ValidMind Supports Agentic AI Governance

ValidMind’s AI governance platform was purpose-built for the challenges financial institutions face with model risk management, and has been extended to support the specific demands of agentic AI governance:

- Automated documentation — generate SR 11-7-aligned model documentation for agentic systems, including multi-agent architectures, tool inventories, and autonomy scope definitions

- Continuous validation — run automated tests in production, not just pre-deployment, with configurable monitoring thresholds aligned to your risk appetite

- Independent validation workflows — structured workflows that keep model developers and validators properly separated, with full audit trails of every review and approval

- Regulatory alignment — built-in templates and test suites aligned to SR 11-7, the FS AI RMF, E-23, SS1/23, and EU AI Act requirements

- Multi-agent inventory — track every agent in your inventory, with named owners, permission scopes, and validation status in a single governance dashboard

See how a Fortune 500 bank accelerated its AI governance program using ValidMind: read the case study →

Frequently Asked Questions: Agentic AI Governance in Financial Services

What is agentic AI governance in financial services?

Agentic AI governance in financial services is the set of policies, controls, monitoring systems, and accountability structures that financial institutions use to oversee autonomous AI systems — agents that plan, decide, and act without direct human intervention at every step. It extends traditional model risk management (SR 11-7) to cover continuous oversight, dynamic evaluation, tiered autonomy controls, and multi-agent accountability chains.

How does agentic AI differ from traditional AI in terms of governance?

Traditional AI models produce outputs that humans review before acting. Agentic AI executes multi-step workflows autonomously — pulling data, making decisions, calling APIs, and iterating toward goals without human-in-the-loop checkpoints at every step. This means governance must shift from pre-deployment testing to continuous monitoring, and from binary controls to tiered, contextual authority frameworks. Errors can propagate across entire workflows before detection.

What regulations apply to agentic AI in financial services?

Key frameworks include SR 11-7 / OCC 2011-12 (model risk management, extended to AI agents), the new U.S. Financial Services AI Risk Management Framework (FS AI RMF, published February 2026, with 230 control objectives), FINRA’s 2026 Regulatory Oversight Report guidance on AI agents, Canada’s OSFI Guideline E-23, the EU AI Act (high-risk classification, transparency, human oversight), and the UK PRA’s SS1/23 model risk management principles.

What is multi-agent governance and why does it matter for banks?

Multi-agent governance refers to the policies and controls needed when multiple autonomous AI agents collaborate — delegating tasks, sharing data, and making interdependent decisions. In financial services, a multi-agent system might combine a research agent, a trading-decision agent, and a compliance-check agent. Governance must address inter-agent communication protocols, permission inheritance (can an orchestrator grant sub-agents permissions it doesn’t hold?), accountability chains, complete audit trails across the agent network, and cascading failure containment.

How can ValidMind help with agentic AI governance?

ValidMind’s platform automates the documentation, validation, and continuous monitoring required for SR 11-7-compliant agentic AI governance. It provides automated test generation for agentic systems, structured independent validation workflows, a multi-agent inventory with named owners and permission scopes, and built-in templates aligned to SR 11-7, E-23, SS1/23, and EU AI Act requirements. Learn more at validmind.com/platform/ai-governance/.

What is the biggest risk in agentic AI for financial services?

The biggest risk is autonomy without accountability: agents that optimize for goal completion without contextual awareness of regulatory constraints, data privacy rules, or financial decisioning boundaries. Such agents can cascade errors across workflows before detection, create audit gaps, and trigger regulatory violations. Recent enforcement cases (Two Sigma’s $90M SEC penalty, Brex Treasury’s $900K FINRA fine) show that algorithmic governance failures carry direct financial consequences. The OWASP Top 10 for LLM Applications identifies “Excessive Agency” as a top vulnerability, one that grows with the autonomy and tool access agentic systems are given.

The Bottom Line: Governance Enables Agentic AI

Agentic AI will define the next decade of financial services. McKinsey estimates that generative AI could add between $2.6 trillion and $4.4 trillion annually to the global economy, with banking among the sectors that stand to gain the most. The institutions that capture that value will not be the ones that deploy AI the fastest. They will be the ones that deploy the most trusted AI.

The path to responsible agentic AI runs through governance. Not governance as a compliance checkbox that slows you down, but governance as the infrastructure that lets you move fast without breaking things. Build it early. Build it into the system design. And build it on a platform that can scale with the complexity of what’s coming.

📄 Download the full technical brief: Governing Agentic AI in Financial Services.

Inside, you’ll learn:

- A full framework for agentic AI governance

- New testing and evaluation methodologies

- How to manage multi-agent systems

- Practical steps to deploy safely at scale

Final Thought

The autonomous enterprise is coming.

The question is not whether your institution will adopt agentic AI.

It is whether you will govern it well enough to trust it.

Ready to see how ValidMind supports agentic AI governance in practice? Request a demo →