AI Is Rewriting the Rules of Model Risk Management

In 2012, JPMorgan Chase lost more than $6 billion in the London Whale trading scandal, a model risk management failure traced in part to a spreadsheet error in a value-at-risk model that understated risk for months. Nearly a decade later, in 2021, Zillow recorded a $304 million inventory write-down when its home-pricing model failed to adapt to a shifting market, leading to major layoffs.

These weren’t isolated incidents. They exposed a deeper issue: a breakdown in model risk management, the discipline meant to ensure the models your organization relies on actually perform as expected.

For years, model risk management was built around predictable systems like linear models and rule-based approaches. These models were easier to explain, validate, and monitor. Governance frameworks and regulatory expectations evolved around this level of transparency and control.

AI has changed that entirely. Today’s models, including machine learning and generative systems, are dynamic and often opaque. They evolve with data, behave unpredictably, and can drift in production without immediate detection.

The problem is that governance frameworks, tools, and even internal mindsets have not evolved at the same pace. This creates a growing gap between how models operate and how they are managed.

This blog explores where traditional model risk management falls short in the age of AI, and what a modern, scalable approach needs to look like for organizations aiming to deploy AI responsibly.

The Foundations of Model Risk Management

To understand why AI is straining the current system, you first need to understand what that system was built for and why it worked well for so long.

A Framework Born From Crisis

Before 2008, model risk management in financial services was largely informal. Your institution likely relied on the judgment of individual modelers, internal best practices, and loosely applied conventions. There were no universal standards, no mandatory governance structures, and no regulatory teeth behind the concept of model risk.

The 2008 global financial crisis changed that permanently. Flawed value-at-risk models had contributed to catastrophic miscalculations of systemic risk across the industry. Regulators responded decisively.

In 2011, the US Federal Reserve and the Office of the Comptroller of the Currency jointly issued SR 11-7, supervisory guidance that became the gold standard for model risk management frameworks globally. It introduced requirements for model inventory, independent validation, conceptual soundness review, and structured governance.

Other regulators followed. The UK Prudential Regulation Authority issued SS1/23, Canada's Office of the Superintendent of Financial Institutions (OSFI) issued Guideline E-23, and the European Banking Authority, the Monetary Authority of Singapore, and others introduced their own aligned guidance.

For institutions operating across borders, mapping the SS1/23 model risk management principles for banks onto existing governance structures became an urgent priority.

Regulatory compliance was now a defining expectation, and institutions invested heavily in building out dedicated model risk management teams, second-line validation functions, and third-line audit oversight.

What These Frameworks Assumed

These frameworks were built around a clear and reasonable set of assumptions. Your model lifecycle was expected to be linear and manageable: design a model, develop it, validate it independently, deploy it, and monitor it periodically. Models were expected to have stable inputs, fixed logic, and outputs that could be documented in advance and reproduced consistently.

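In practice, model validation under SR 11-7 was largely retrospective. Although the guidance names ongoing monitoring and outcomes analysis as core elements of validation alongside conceptual soundness, most validation work checked whether a model was conceptually sound, whether its implementation was accurate, and whether its outputs were reasonable given its intended purpose. The emphasis was on point-in-time assessment, not continuous surveillance.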

Regulatory compliance was achievable because model behavior was largely predictable. You could write a model documentation report and have confidence it would remain accurate for the life of the model. You could validate a model once and trust that validation held, unless the model was explicitly changed.

The entire system worked because traditional models were knowable. AI is not.

Why AI Models Are a Different Risk Animal Entirely

The structural differences between traditional statistical models and modern AI systems are not incremental. They are fundamental, and they break nearly every assumption that your existing model risk management framework was built on.

The Black Box Problem

Traditional models, whether linear regressions, decision trees, or credit scorecards, have transparent internal logic. You can trace an output back to its inputs and explain exactly how the model arrived at its conclusion. This explainability is not just a nice-to-have: it is a core requirement under SR 11-7, SS1/23, and virtually every major regulatory framework.

AI models, particularly deep learning systems and large language models, do not offer this transparency. Their internal representations are distributed across millions or billions of parameters.

Even the teams that trained them cannot fully explain why a particular input produced a particular output. This opacity creates a fundamental tension with the explainability requirements embedded in risk management frameworks that your compliance team is already navigating.

The consequences are real. If your credit risk model makes a decision that harms a customer, and a regulator asks you to explain the logic behind that decision, a traditional model gives you a clear answer. A deep learning model may not.

Understanding how GenAI and model risk management best practices intersect is now essential for any institution deploying these systems. ValidMind helps organizations build the documentation infrastructure needed to address explainability requirements even for complex AI systems.

Dynamic Behavior and Model Drift

A traditional model stays fixed unless your team explicitly changes it. An AI model can shift its effective behavior as the data it encounters in production diverges from the data it was trained on.

This phenomenon, commonly referred to as model drift, means that a model that was validated as sound at deployment may quietly become unreliable over time without any explicit change to its code or parameters.

Model drift introduces a category of risk that point-in-time validation simply cannot address. Your validation team may have signed off on a model six months ago, but the model your organization is running in production today may be functionally different from the one that was validated.

Without continuous oversight and real-time model monitoring, that gap is invisible until it produces a failure. Data drift, concept drift, and feature drift all compound this problem. The environment in which your AI models operate is not static, and your risk management frameworks need to reflect that reality.
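One widely used way to quantify data drift for a single input feature is the Population Stability Index (PSI), which compares how a feature's values are spread across bins at validation time versus in production: PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ), where eᵢ and aᵢ are the baseline and production shares in bin i. The sketch below is a minimal, dependency-free illustration; the bin edges, the floor for empty bins, and the 0.1 / 0.25 cut-offs are common conventions chosen for illustration, not requirements of SR 11-7 or any other framework.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges, floor=1e-6):
    """Share of values in each bin defined by the sorted cut points `edges`.

    Empty bins are floored at a tiny value so the log term in PSI stays finite
    (the shares then no longer sum to exactly 1, which is acceptable for a sketch).
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(baseline, production, edges):
    expected = bin_shares(baseline, edges)
    actual = bin_shares(production, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def classify_psi(psi):
    # Common industry rules of thumb, not regulatory thresholds.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate shift"
    return "significant shift"


baseline = list(range(100))                 # feature values seen at validation time
production = [v + 50 for v in range(100)]   # same feature, shifted upward in production
edges = [25, 50, 75]                        # illustrative bin cut points

print(classify_psi(population_stability_index(baseline, baseline, edges)))    # stable
print(classify_psi(population_stability_index(baseline, production, edges)))  # significant shift
```

PSI only detects shifts in a model's inputs (data drift). Concept drift, where the relationship between inputs and outcomes changes, needs outcome-based monitoring as well, such as tracking accuracy once actual outcomes become available.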

Scale and Speed Your Organization Was Not Built For

According to McKinsey, the number of models used across financial institutions has been rising by as much as 25% per year. AI development tools like AutoML and MLOps pipelines have, in some cases, compressed development timelines from months to days.
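To put the upper end of that figure in perspective, here is the arithmetic under the simplifying assumption that an inventory of $N_0$ models grows at a constant rate of $g = 0.25$ per year (real growth will vary from year to year):

$$
N_t = N_0 (1 + g)^t, \qquad
t_{\text{double}} = \frac{\ln 2}{\ln(1 + g)} = \frac{\ln 2}{\ln 1.25} \approx \frac{0.693}{0.223} \approx 3.1 \text{ years}
$$

At that rate an inventory roughly doubles in about three years and reaches about three times its starting size after five ($1.25^5 \approx 3.05$).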

A single data science team can now build, iterate on, and deploy dozens of models in the time it once took to validate one. Traditional model risk management was never designed for this velocity. Validation cycles of 6 to 24 weeks, which ValidMind has consistently observed across institutions, create backlogs that grow faster than they can be cleared.
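The backlog dynamic can be sketched with simple queue arithmetic. The model below is a deliberate simplification and every number in it is hypothetical: it assumes each validator works on one model at a time, so a team clears roughly `validators / cycle_weeks` models per week, and it uses a 12-week cycle from within the 6-to-24-week range cited above.

```python
def project_backlog(weeks, arrivals_per_week, validators, cycle_weeks, start=0.0):
    """Project the size of a validation queue week by week.

    Hypothetical simplification: each validator handles one model at a time,
    so the team completes validators / cycle_weeks validations per week on average.
    """
    capacity_per_week = validators / cycle_weeks
    backlog = start
    history = []
    for _ in range(weeks):
        # New submissions join the queue; completed validations leave it.
        backlog = max(0.0, backlog + arrivals_per_week - capacity_per_week)
        history.append(backlog)
    return history


# Illustrative numbers only: 6 validators, 12-week cycles, 2 new models per week.
print(project_backlog(weeks=4, arrivals_per_week=2, validators=6, cycle_weeks=12))
# [1.5, 3.0, 4.5, 6.0]
```

Whenever arrivals exceed `validators / cycle_weeks`, the queue grows every week. Under this simplification, shortening the cycle raises capacity in the same way that adding headcount does, which is why automation that compresses cycle time matters.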

The result is a growing inventory of AI models that are either waiting in a validation queue or being deployed without adequate oversight. This is not a resourcing problem alone. It is a structural mismatch between the speed of AI development and the pace of model lifecycle governance.

Understanding how AI risk management must evolve to meet this pace is one of the defining challenges for risk officers in 2026.

The Gaps AI Exposes in Legacy Model Risk Management

When you place modern AI systems against the requirements of traditional model risk management, the gaps become visible across documentation, validation speed, monitoring depth, and governance scope.

Static Documentation Cannot Capture Dynamic Systems

SR 11-7 and SS1/23 both require comprehensive model documentation as a foundation of effective governance. The logic is sound: if you cannot document what a model does, you cannot validate it, monitor it, or defend it to a regulator.

The problem is that static documentation, written once at the time of model approval, cannot accurately describe a system whose behavior evolves with its data environment. Your AI model documentation may be technically accurate at the moment it is written and meaningfully outdated three months later.

A model that has drifted in production is a different model from the one your documentation describes, even if nobody has touched its code. Traditional risk management frameworks have no clear answer for this. They were designed for models that stayed put.

Validation Speed Versus AI Development Speed

The validation backlog problem is not new, but AI has made it structurally worse. When your development teams are shipping new models and model updates faster than your validation team can review them, you face an unavoidable choice: slow down development or accept governance gaps.

Neither option is acceptable for institutions trying to stay competitive while maintaining regulatory compliance. This is precisely why scaling model validation in the age of AI has become one of the most pressing operational challenges in financial services today.

ValidMind’s validation automation capabilities are designed specifically to help your team close this gap without adding headcount.

Monitoring That Stops at Deployment

Legacy model risk management frameworks treat post-deployment monitoring as periodic and largely manual. Monthly or quarterly performance reviews made sense when models were stable and model inventories were manageable.

They do not make sense for AI systems that can drift between review cycles and may run across hundreds of concurrent deployments. Continuous oversight is no longer aspirational; it is increasingly a regulatory expectation. The EU AI Act requires providers of high-risk AI systems to operate post-market monitoring, SR 11-7 already treats ongoing monitoring as a core element of validation, and voluntary frameworks such as the NIST AI RMF call for AI systems to be monitored after deployment.

If your organization’s model monitoring practice still relies on scheduled manual reviews, you are operating behind the curve. Without a centralized model inventory, your organization has no reliable way to know how many models are in production, which ones carry open findings, and which are overdue for review.
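A centralized inventory turns those three questions into simple queries. The sketch below uses a hypothetical record schema (`ModelRecord` and its fields are illustrative, not any product's data model) to answer them as of a given date.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ModelRecord:
    # Hypothetical inventory schema for illustration only.
    model_id: str
    status: str          # e.g. "production", "in_validation", "retired"
    open_findings: int   # unresolved validation or audit findings
    next_review: date    # date the next periodic review is due


def inventory_summary(inventory, today):
    in_production = [m for m in inventory if m.status == "production"]
    return {
        "in_production": len(in_production),
        "with_open_findings": sorted(m.model_id for m in in_production if m.open_findings > 0),
        "overdue_for_review": sorted(m.model_id for m in in_production if m.next_review < today),
    }


inventory = [
    ModelRecord("credit_pd_v3", "production", 2, date(2025, 1, 15)),
    ModelRecord("fraud_gbm_v7", "production", 0, date(2025, 9, 1)),
    ModelRecord("churn_nn_v1", "in_validation", 1, date(2025, 3, 1)),
    ModelRecord("aml_rules_v2", "retired", 0, date(2024, 6, 30)),
]
print(inventory_summary(inventory, today=date(2025, 6, 1)))
# {'in_production': 2, 'with_open_findings': ['credit_pd_v3'], 'overdue_for_review': ['credit_pd_v3']}
```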

Governance Gaps Across the AI System Boundary

Traditional model risk management governs individual models. Many AI systems are not individual models. They are ecosystems: a foundation model, retrieval components, external data feeds, third-party APIs, orchestration layers, and output guardrails.

Your risk management frameworks define what counts as a model and what falls within the validation scope. For most legacy frameworks, agentic AI systems fall outside those definitions entirely.

This is a structural governance gap, not a judgment call. The tools an AI system uses, the external data it pulls, and the downstream actions it triggers are all sources of risk that traditional MRM oversight does not reach.
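One way to make the system boundary concrete is to model an AI system as a dependency graph and ask which reachable components sit outside validation scope. The sketch below does this with a breadth-first traversal; the component names and the graph itself are hypothetical, chosen to mirror the ecosystem described above.

```python
from collections import deque


def out_of_scope_components(entry_point, calls, validated):
    """Return every component reachable from `entry_point` that is not in `validated`.

    `calls` maps each component to the components it invokes or pulls data from.
    """
    reachable = {entry_point}
    queue = deque([entry_point])
    while queue:  # breadth-first traversal of the dependency graph
        component = queue.popleft()
        for dependency in calls.get(component, []):
            if dependency not in reachable:
                reachable.add(dependency)
                queue.append(dependency)
    return sorted(reachable - set(validated))


# Hypothetical retrieval-augmented assistant in which only the foundation model was validated.
calls = {
    "orchestrator": ["foundation_model", "retriever", "payments_api"],
    "retriever": ["vector_store", "news_feed"],
    "foundation_model": ["output_guardrail"],
}
print(out_of_scope_components("orchestrator", calls, validated={"foundation_model"}))
```

In this toy example, validating the model alone leaves six of the seven reachable components, including an external payments API that can trigger downstream actions, outside validation scope.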

What AI Model Risk Management Actually Requires

Acknowledging the gaps is the starting point. The more important question is what your model risk management practice needs to look like to govern AI effectively.

Continuous Validation Embedded Into the Model Lifecycle

Model validation needs to shift from a gate at the end of development to a process that runs in parallel with it. For AI systems, this means embedding automated testing, fairness checks, performance benchmarking, and documentation capture into your MLOps pipelines so that validation evidence accumulates continuously rather than being assembled under pressure before a deployment deadline.
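As a rough illustration of validation evidence accumulating inside a pipeline, the sketch below runs two automated checks, a performance threshold and a simple fairness metric (demographic parity difference), and records each result with a timestamp. It is a generic example, not ValidMind's developer framework API; the metric choices, thresholds, and data are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str
    recorded_at: str  # UTC timestamp, so each result can serve as validation evidence


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def parity_gap(y_pred, groups):
    """Largest difference in positive-prediction rate between groups
    (demographic parity difference)."""
    rates = []
    for g in set(groups):
        preds = [p for p, grp in zip(y_pred, groups) if grp == g]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def run_checks(y_true, y_pred, groups, min_accuracy=0.8, max_gap=0.1):
    # Thresholds are illustrative placeholders, not recommended values.
    now = datetime.now(timezone.utc).isoformat()
    acc, gap = accuracy(y_true, y_pred), parity_gap(y_pred, groups)
    return [
        CheckResult("accuracy", acc >= min_accuracy, f"{acc:.2f} (min {min_accuracy})", now),
        CheckResult("parity_gap", gap <= max_gap, f"{gap:.2f} (max {max_gap})", now),
    ]


results = run_checks(
    y_true=[1, 0, 1, 0, 1, 0, 1, 0],
    y_pred=[1, 0, 1, 0, 1, 0, 1, 1],
    groups=["a", "a", "a", "a", "b", "b", "b", "b"],
)
for r in results:
    print(r.name, "PASS" if r.passed else "FAIL", r.detail)

# In a CI/CD job, a failed check could block promotion of the model.
gate_passed = all(r.passed for r in results)
```

Here the model clears the accuracy threshold but fails the fairness check, so the evidence record shows exactly why the gate stopped it.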

This also means expanding the scope of what validation covers. Behavioral validation, scenario-based testing with adversarial inputs, and alignment assessments are now baseline requirements for AI model risk management, not optional additions.

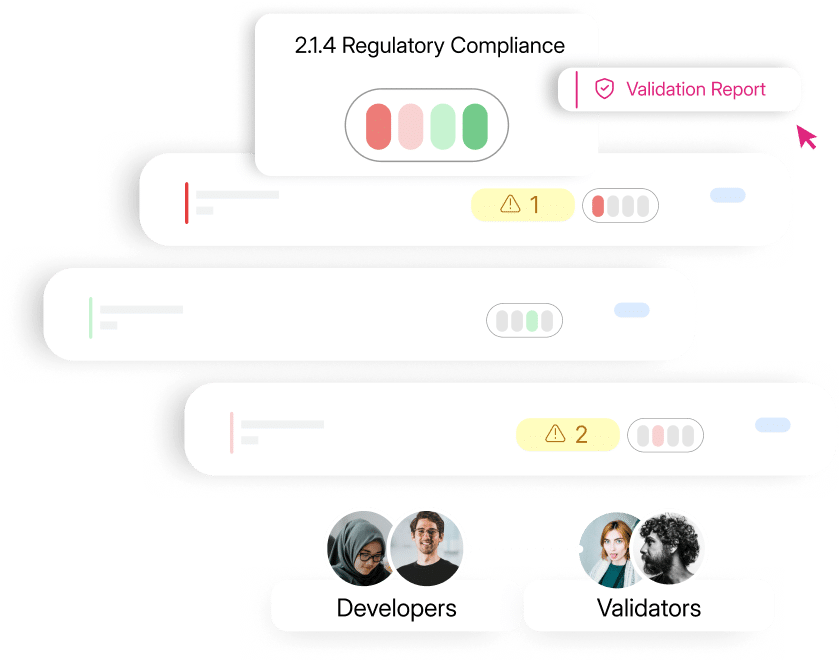

Validation scope must extend to the entire AI system, including its tools, its data sources, and its integration points, not just the model weights at its core. ValidMind creates a direct feedback loop between developers and validators, with compliance status tracked at the section level so gaps are flagged before they become findings.

ValidMind’s developer framework allows your model development teams to run automated tests and generate structured documentation as part of their existing workflow, feeding directly into the validation process without creating additional bottlenecks.

Living Documentation That Reflects Reality

Your model documentation needs to be a living record, not a point-in-time artifact. For AI systems, this means documentation that updates as the model’s configuration, training data, or operational environment changes.

It means capturing data lineage, model architecture rationale, validation methodology, explainability assessments, bias analyses, and monitoring thresholds in a structured format that auditors can interrogate.

Versioning is essential here. When a regulator or internal auditor asks what changed between two versions of a model, and why, your documentation should be able to answer that question immediately and completely. Manual documentation practices simply cannot maintain this level of traceability at AI scale.
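A minimal sketch of that traceability, assuming each model version's documentation metadata is kept as a structured record: the code lists every field that was added, removed, or changed between two versions and computes a content fingerprint for each. The field names and values are hypothetical.

```python
import hashlib
import json

ABSENT = "<absent>"


def fingerprint(record):
    """Stable SHA-256 hash of a documentation record (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


def diff_versions(old, new):
    """List (field, before, after) for every field that was added, removed, or changed."""
    changes = []
    for key in sorted(set(old) | set(new)):
        before, after = old.get(key, ABSENT), new.get(key, ABSENT)
        if before != after:
            changes.append((key, before, after))
    return changes


# Hypothetical documentation metadata for two versions of one model.
v1 = {
    "version": "1.0",
    "training_window": "2022-01/2023-12",
    "features": ["income", "utilization"],
    "approval_threshold": 0.5,
}
v2 = {
    "version": "1.1",
    "training_window": "2022-07/2024-06",
    "features": ["income", "utilization", "tenure"],
    "approval_threshold": 0.5,
    "change_rationale": "refit after drift alert",
}

for name, before, after in diff_versions(v1, v2):
    print(f"{name}: {before} -> {after}")
print(fingerprint(v1) != fingerprint(v2))  # True
```

Recording a rationale field alongside each change is what lets the diff answer the "and why" part of an auditor's question, not just the "what".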

ValidMind supports collaborative, real-time documentation, so every stakeholder, from developers to compliance teams, works from a single, always-current source of truth.

ValidMind’s platform automates evidence capture and links it to your validation guidelines in real time, so your documentation reflects the current state of your models rather than the state they were in when someone last had time to update a document.

Real-Time Monitoring and Human-in-the-Loop Controls

Continuous oversight in practice means automated drift detection, real-time data quality checks, bias monitoring, and performance tracking running in production across your entire model inventory. For generative AI systems, it also means hallucination detection and output guardrails that prevent harmful or non-compliant outputs from reaching end users.
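Alongside drift statistics, real-time data quality checks can be as simple as comparing each incoming batch against what the model saw during validation. A minimal sketch, assuming scoring records arrive as dictionaries and that the expected ranges and the 5% missing-value tolerance were recorded at validation time (both hypothetical):

```python
def data_quality_alerts(batch, expected_ranges, max_missing_rate=0.05):
    """Check a batch of scoring records (a list of dicts) against simple rules.

    `expected_ranges` maps feature name -> (min, max) observed at validation time.
    """
    alerts = []
    for feature, (low, high) in expected_ranges.items():
        values = [row.get(feature) for row in batch]
        missing_rate = sum(v is None for v in values) / len(values)
        if missing_rate > max_missing_rate:
            alerts.append(f"{feature}: missing rate {missing_rate:.0%} exceeds {max_missing_rate:.0%}")
        out_of_range = sum(1 for v in values if v is not None and not low <= v <= high)
        if out_of_range:
            alerts.append(f"{feature}: {out_of_range} value(s) outside [{low}, {high}]")
    return alerts


# Hypothetical scoring batch; the ranges and tolerance are illustrative.
batch = [
    {"age": 34, "income": 52_000},
    {"age": None, "income": 61_000},
    {"age": 140, "income": 48_000},
    {"age": 45, "income": 75_000},
]
for alert in data_quality_alerts(batch, {"age": (18, 100), "income": (0, 1_000_000)}):
    print(alert)
```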

For high-risk use cases, automated monitoring is necessary but not sufficient. Human-in-the-loop controls are essential for decisions with significant consequences for customers or counterparties. Your risk mitigation framework needs to define clearly where automation ends and human review begins, and that boundary needs to be documented and auditable.
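One way to keep that boundary documented and auditable is to express it as explicit configuration rather than logic buried in application code. A minimal sketch; the policy fields, the 0.95 confidence cut-off, and the "high-impact" flag are illustrative assumptions:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewPolicy:
    """Hypothetical, versionable record of where automation ends and human review begins."""
    min_confidence_for_automation: float = 0.95
    always_review_high_impact: bool = True


def route(confidence, high_impact, policy=ReviewPolicy()):
    # Decisions with significant customer consequences go to a person regardless of score.
    if high_impact and policy.always_review_high_impact:
        return "human_review"
    if confidence >= policy.min_confidence_for_automation:
        return "automated"
    return "human_review"


print(route(0.99, high_impact=False))  # automated
print(route(0.99, high_impact=True))   # human_review
print(route(0.80, high_impact=False))  # human_review
```

Because the policy is an immutable dataclass, each version of it can be stored alongside the model's documentation and cited when an auditor asks why a given decision was or was not reviewed by a person.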

Model monitoring should generate evidence logs, not just internal dashboards. Regulators expect to see documented proof of ongoing oversight, not assurances that oversight is happening somewhere.
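To illustrate what an evidence log can look like beyond a dashboard, the sketch below keeps an append-only log in which each entry stores the SHA-256 hash of the previous entry, so any later edit breaks the chain and becomes detectable. Hash chaining is one illustrative technique, not a regulatory requirement or a description of ValidMind's implementation, and the field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_evidence(log, model_id, event, details):
    # Field names are hypothetical; each entry commits to the hash of the one before it.
    body = {
        "model_id": model_id,
        "event": event,
        "details": details,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
append_evidence(log, "credit_pd_v3", "drift_check", {"psi": 0.31, "status": "alert"})
append_evidence(log, "credit_pd_v3", "alert_acknowledged", {"owner": "model-risk-team"})
print(verify(log))  # True
log[0]["details"]["psi"] = 0.05  # simulate an after-the-fact edit
print(verify(log))  # False
```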

ValidMind’s ongoing monitoring surfaces alerts as soon as model behavior shifts, routing them directly to the responsible team members so nothing drifts undetected in production.

Aligning With the Evolving Regulatory Landscape

Your organization is not operating in a single regulatory environment. SR 11-7, SS1/23, E-23, the EU AI Act, NIST AI RMF, and ISO 42001 represent a layered and evolving set of expectations that are converging on common themes: risk-tiered oversight, documented validation, explainability requirements, and continuous monitoring for high-risk systems.

Building your AI governance program proactively, before a specific regulatory requirement forces your hand, gives your organization a significant operational advantage.

For organizations evaluating where to start, the five benefits of using ValidMind for MRM show how purpose-built tooling translates governance requirements into practical, day-to-day efficiency gains for model risk teams.

Institutions that treat regulatory compliance as the floor rather than the ceiling deploy AI faster, audit with less friction, and carry lower regulatory risk over time.

How ValidMind Closes the Gap Between Traditional MRM and AI

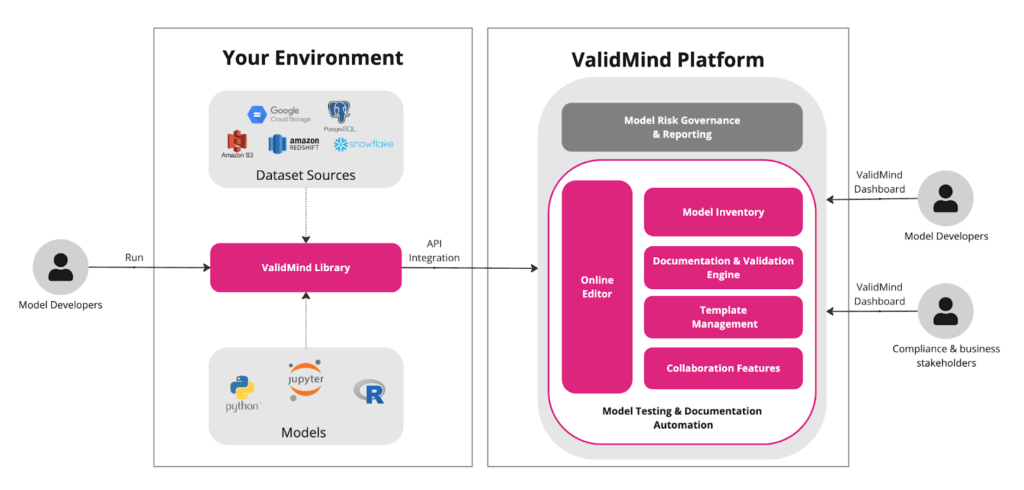

ValidMind is designed for organizations scaling AI under increasing regulatory pressure, growing validation backlogs, and the need to manage model risk beyond what manual processes can handle. As an AI and model risk management platform, it brings documentation, validation, monitoring, and governance into a single, connected workflow.

Instead of treating these as separate functions, ValidMind ensures that development outputs feed directly into validation, and validation continuously informs monitoring and governance. This creates a more streamlined and consistent approach to AI and model risk management.

For your development teams, the platform integrates into existing Python and MLOps environments, allowing automated tests to generate structured documentation and validation evidence during development itself. This reduces friction and removes the need for additional compliance-heavy workflows.

For validation teams, automation significantly reduces manual effort by linking documentation to validation requirements and generating audit-ready reports faster. Your validators still maintain control, but without being slowed down by repetitive documentation tasks.

Risk and compliance teams benefit from centralized model inventory, real-time monitoring, and built-in policy alignment. This makes it easier to manage audits, maintain governance standards, and track models across their lifecycle within a unified system.

A practical example of this can be seen in the General Bank of Canada case study, where a regulated financial institution improved validation efficiency and reduced documentation overhead while scaling its AI governance capabilities.

Whether your organization manages a few models or hundreds, ValidMind scales with your needs without requiring proportional increases in governance effort. This is the shift modern AI and model risk management demands.

Model Risk Management Must Evolve: The Question Is Whether Yours Will

Traditional model risk management was built for a world of static, knowable models, and it served that world well. The London Whale and Zillow failures were not failures of MRM as a concept. They were failures of execution: of the tools, processes, and cultures that are supposed to turn good frameworks into effective governance.

AI does not just demand better execution of traditional MRM. It demands a fundamentally different approach. Your model lifecycle is no longer linear. Your models are no longer stable. Your validation processes can no longer be periodic and manual. And your documentation can no longer be a one-time artifact written at the moment of deployment.

The organizations that will lead in AI are not the ones that deploy the most models. They are the ones that can deploy AI with genuine confidence, because their governance infrastructure has kept pace with their innovation.

Continuous oversight, automated validation, living documentation, and real-time model monitoring are no longer aspirational features of a future MRM program. They are the baseline requirements of governing AI responsibly today.

Regulatory compliance is not the ceiling. It is the floor. The institutions that embed AI governance early will audit with confidence, deploy faster, and build the kind of trust in their AI systems that translates into durable competitive advantage.

The rules of model risk management have been rewritten. The question is whether your framework has kept up.

See How ValidMind Modernizes Your Model Risk Management

ValidMind is purpose-built to close the gap between where traditional model risk management ends and where AI demands your governance begin. From automated model validation and living documentation to real-time model monitoring and audit-ready reporting, ValidMind gives your team the infrastructure to govern AI confidently at scale.

Whether you are a model risk officer managing a growing validation backlog, a chief risk officer building an enterprise AI governance program, or a data science leader trying to deploy models faster without sacrificing oversight, ValidMind fits into your existing workflow and scales with your model inventory.

Schedule a demo today and see how leading financial institutions are using ValidMind to validate faster, monitor continuously, and stay ahead of evolving AI regulations.

Frequently Asked Questions

1. What is model risk management?

Model risk management is the process of identifying, assessing, and monitoring risks from models used in decision-making. It covers the full lifecycle, including development, validation, deployment, and ongoing monitoring. The goal is to ensure models are reliable, compliant, and performing as expected.

2. Why is model risk management important for AI?

AI models are dynamic, harder to explain, and can drift over time, making risks less predictable. Without structured model risk management, your organization may deploy systems that behave unexpectedly in production. This increases exposure to financial, regulatory, and reputational risks.

3. What is SR 11-7 and why does it matter?

SR 11-7 is supervisory guidance issued in 2011 by the US Federal Reserve and the OCC that sets expectations for model risk management at banking organizations. It covers governance, validation, and monitoring requirements. While originally designed for traditional models, it now serves as a foundation for AI model governance.

4. How is AI model risk management different from traditional MRM?

Traditional MRM focuses on stable, explainable models, while AI models are dynamic and less transparent. AI model risk management requires continuous monitoring, lifecycle-based governance, and handling of model drift. It also extends beyond individual models to entire AI systems.

5. What is model drift and why does it matter for risk management?

Model drift occurs when a model’s performance degrades because the data it sees in production, or the relationship between that data and the outcomes it predicts, shifts over time. This can lead to inaccurate or biased outputs without obvious warning. Continuous monitoring helps detect drift early and maintain reliable performance.

6. What does continuous model monitoring involve?

Continuous model monitoring tracks performance, data quality, and outputs in real time. It includes drift detection, anomaly alerts, and audit logging. Solutions such as ValidMind help automate monitoring and maintain consistent oversight.

7. What regulatory frameworks govern AI model risk management?

Key frameworks include SR 11-7 (US), SS1/23 (UK), E-23 (Canada), the EU AI Act, and the voluntary NIST AI RMF. These frameworks emphasize validation, documentation, explainability, and continuous monitoring. Organizations need to align their governance practices with the frameworks that apply to them.

8. What is an AI risk assessment framework?

An AI risk assessment framework evaluates risks associated with AI systems before and after deployment. It considers data quality, model complexity, bias, and regulatory impact. This helps organizations apply appropriate controls based on risk levels.

9. How can financial institutions scale model validation for AI?

Scaling validation requires moving from manual processes to automated workflows integrated into development pipelines. This reduces documentation effort and speeds up validation cycles. Tools such as ValidMind help streamline validation while maintaining audit readiness.

10. What should organizations look for in a model risk management solution?

Organizations should look for capabilities that cover the full lifecycle, including documentation, validation, monitoring, and audit tracking. Automation, integration, and scalability are key factors. ValidMind supports these requirements by enabling structured and consistent model risk management.