How AI Governance Monitoring Improves Model Risk Oversight

AI governance monitoring is becoming essential as enterprises rapidly expand their use of AI across business functions. As models scale, so do risks tied to compliance, performance, and decision-making accuracy. While many organizations establish strong AI governance frameworks, they often struggle with maintaining continuous oversight after deployment.

This is where AI governance monitoring plays a critical role. It ensures models stay aligned with regulatory standards, internal policies, and defined risk thresholds. By enabling AI lifecycle monitoring and strengthening model risk oversight, it helps teams detect issues early and maintain responsible AI oversight across evolving environments.

In many cases, organizations only realize the importance of AI governance monitoring after facing performance drops or compliance gaps in production. According to a recent report, only 24% of organizations have operationalized AI governance across their models, highlighting a major gap between adoption and oversight.

Models that once performed well can drift over time due to changing data patterns, making continuous monitoring essential for maintaining reliability and trust.

Without AI governance monitoring, gaps can quickly emerge, leading to compliance failures or unexpected model behavior. The same urgency runs through the broader discussion of why robust AI governance matters.

What Is AI Governance Monitoring?

AI governance monitoring plays a critical role in ensuring that AI systems remain reliable, compliant, and aligned with business objectives over time. As organizations scale AI adoption, continuous oversight becomes necessary to maintain control and reduce risk.

Understanding how AI governance monitoring works helps teams move from static policies to ongoing, real-world enforcement.

Definition of AI Governance Monitoring

AI governance monitoring refers to the continuous oversight of AI systems to ensure they operate in line with defined AI governance policies, regulatory expectations, and internal standards.

In practice, AI governance monitoring covers multiple layers of control, including:

- Model performance tracking over time

- Ongoing model validation monitoring

- Documentation completeness and audit readiness

- Alignment with evolving regulatory requirements

Rather than a one-time checkpoint, AI governance monitoring acts as a continuous feedback loop that supports AI compliance monitoring and strengthens enterprise AI risk management.

Difference Between AI Governance and AI Governance Monitoring

It’s easy to confuse governance with monitoring, but they serve different purposes.

AI governance focuses on defining the structure:

- Policies

- Frameworks

- Standards

AI governance monitoring, on the other hand, ensures those structures are actually followed:

- Real-time oversight of deployed models

- Enforcement of governance policies

- Continuous validation and compliance checks

In simple terms, AI governance sets the rules, while AI governance monitoring continuously verifies that deployed models actually follow them.

Why Monitoring Is Essential for Enterprise AI Governance

AI systems are not static. Models evolve as new data flows in, making AI governance monitoring critical for long-term reliability.

Key challenges include:

- Data drift and concept drift impacting model accuracy

- Frequent updates or retraining cycles

- Changing regulatory expectations

Without AI governance monitoring, governance becomes a one-time activity instead of an ongoing process. Continuous monitoring ensures models remain aligned with AI enterprise governance goals and maintain consistent responsible AI oversight across their lifecycle.
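To make the drift challenge concrete, here is a minimal sketch of one common way to quantify data drift on a single numeric feature: the Population Stability Index (PSI). The function name, the equal-width binning, and the `eps` floor are illustrative assumptions, not something this article or any particular platform prescribes.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample and a live sample of one numeric feature."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread; cannot build bins")
    width = (hi - lo) / bins  # equal-width bins over the reference range

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bin_shares(reference)
    live_shares = bin_shares(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_shares, live_shares))
```

A commonly cited rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as worth investigating, and above 0.25 as significant drift. Those cut-offs are conventions rather than regulatory requirements, so teams would normally tune them per model.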

The Growing Importance of Model Risk Oversight

Model risk oversight has become a critical focus as AI systems increasingly influence high-stakes decisions. As models grow more complex and dynamic, the risk of errors, bias, and compliance gaps rises significantly.

This makes continuous AI governance monitoring essential to maintain control, visibility, and trust in AI-driven outcomes.

What Is Model Risk?

Model risk refers to the possibility that AI systems produce incorrect, biased, or unreliable outputs. Even high-performing models can introduce hidden risks over time.

The potential impact is significant:

- Financial losses due to incorrect predictions

- Regulatory violations from non-compliant decisions

- Reputational damage that affects customer trust

This is why model risk oversight is becoming a core priority within AI governance models.

Why AI Systems Introduce New Risk Challenges

AI-driven systems bring complexities that traditional systems don’t. These include:

- Black-box models with limited explainability

- Continuous retraining that changes model behavior

- Heavy reliance on large and evolving datasets

- Dynamic, real-time decision-making environments

These factors make AI governance monitoring essential for maintaining visibility and control. Without it, risks can go unnoticed until they escalate.

Regulatory Focus on Model Risk Management

Regulators now expect organizations to demonstrate structured and ongoing enterprise AI risk management practices. This includes:

- Defined model validation processes

- Continuous AI governance monitoring

- Documented governance and compliance workflows

Adopting solutions aligned with AI model risk management helps organizations operationalize these requirements while strengthening overall AI governance monitoring and compliance readiness.

Key Components of AI Governance Monitoring

AI governance monitoring relies on a few core components that ensure models remain compliant, reliable, and aligned with business and regulatory expectations. These components help organizations move from static governance frameworks to continuous, real-time oversight across the AI lifecycle.

Continuous AI Lifecycle Monitoring

AI governance monitoring should not stop at deployment. It needs to span the entire lifecycle of an AI system to ensure consistent control and visibility.

This includes monitoring across:

- Model development

- Validation phases

- Deployment environments

- Post-deployment performance

By embedding AI governance monitoring at each stage, organizations can enforce policies continuously rather than treating governance as a checkpoint. This approach strengthens AI lifecycle monitoring and ensures effective model validation monitoring beyond initial approvals.

In real-world scenarios, teams often discover that models performing well during testing behave differently in production. Continuous AI governance monitoring helps detect such gaps early and supports stronger model risk oversight.

ValidMind embeds model testing directly into the development workflow, running automated checks and flagging errors before they reach production, so your teams catch issues at the source rather than after deployment.

Risk-Based Monitoring Framework

Not all models carry the same level of risk. A strong AI governance monitoring strategy prioritizes oversight based on impact and exposure.

Organizations typically assess risk based on:

- Model impact on business decisions

- Regulatory exposure and compliance requirements

- Whether the model is customer-facing or internal

- Financial and operational risk levels

This risk-based approach allows teams to allocate resources efficiently while strengthening enterprise AI risk management. High-risk models receive deeper AI governance monitoring, while lower-risk systems are monitored proportionally.
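As a rough illustration of the risk-based approach, the factors above can be turned into a score that decides how closely each model is monitored. The 1 to 5 scales, the weights, the tier cut-offs, and the review intervals below are all hypothetical placeholders that an organization would replace with its own policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRiskProfile:
    name: str
    business_impact: int      # hypothetical scale: 1 (low) to 5 (high)
    regulatory_exposure: int  # hypothetical scale: 1 (low) to 5 (high)
    financial_exposure: int   # hypothetical scale: 1 (low) to 5 (high)
    customer_facing: bool


def risk_score(profile: ModelRiskProfile) -> int:
    """Additive score; customer-facing models get a fixed uplift (assumed)."""
    score = (
        profile.business_impact
        + profile.regulatory_exposure
        + profile.financial_exposure
    )
    if profile.customer_facing:
        score += 2
    return score


def monitoring_tier(profile: ModelRiskProfile) -> str:
    """Map the score to a tier; the cut-offs are illustrative, not a standard."""
    score = risk_score(profile)
    if score >= 13:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


# Assumed review cadence per tier, in days.
REVIEW_INTERVAL_DAYS = {"high": 1, "medium": 7, "low": 30}

credit_model = ModelRiskProfile("credit-scoring", 5, 5, 4, customer_facing=True)
internal_tool = ModelRiskProfile("ticket-router", 1, 1, 2, customer_facing=False)
```

With these placeholder values, `credit_model` lands in the high tier and `internal_tool` in the low tier, so the first would be reviewed daily and the second monthly.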

Such prioritization is essential for scaling AI enterprise governance without overwhelming teams.

Compliance and Policy Monitoring

A key function of AI governance monitoring is ensuring that AI systems consistently follow defined rules and standards.

Monitoring must validate adherence to:

- Internal AI governance policies

- Industry regulations and compliance frameworks

- Ethical guidelines and AI governance models aligned with responsible AI principles

This is where AI compliance monitoring becomes critical. Instead of relying on periodic audits, AI governance monitoring enables continuous checks, reducing the risk of violations and ensuring ongoing responsible AI oversight.

ValidMind evaluates each test result in real time, flagging what requires attention and providing AI-assisted explanations so your compliance teams always have the context they need to act quickly.

For organizations operating in regulated industries, this level of monitoring is no longer optional. It is a baseline expectation.

Model Documentation and Validation Tracking

Strong AI governance monitoring also requires structured tracking of documentation and validation processes. Without proper records, demonstrating compliance becomes difficult.

Key elements include:

- Validation artifacts and testing results

- Updated model documentation

- Approval workflows across teams

- Audit-ready records for regulatory reviews

Modern AI governance software and AI governance platforms help centralize these activities, making it easier to maintain consistency and transparency.

By integrating documentation tracking into AI governance monitoring, organizations can ensure that every model remains explainable, traceable, and aligned with governance standards over time.
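One way to picture documentation tracking is as an automated completeness check that runs alongside other monitoring. The sketch below assumes a hypothetical set of required artifact names and a one-year freshness window; neither comes from a specific regulation or product.

```python
from datetime import date
from typing import Dict, List

# Hypothetical artifact names; a real policy would define its own list.
REQUIRED_ARTIFACTS = (
    "validation_report",
    "test_results",
    "model_documentation",
    "approval_record",
)


def audit_gaps(
    artifacts: Dict[str, date],
    as_of: date,
    max_age_days: int = 365,
) -> List[str]:
    """List required artifacts that are missing or older than max_age_days.

    `artifacts` maps an artifact name to the date it was last updated.
    """
    gaps = []
    for name in REQUIRED_ARTIFACTS:
        updated = artifacts.get(name)
        if updated is None:
            gaps.append(f"{name}: missing")
        elif (as_of - updated).days > max_age_days:
            gaps.append(f"{name}: stale (last updated {updated.isoformat()})")
    return gaps
```

An empty result means the model's record set is complete and current under the assumed policy. Any entries returned can feed directly into a findings list for the model owner.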

Challenges Organizations Face in AI Governance Monitoring

Even with defined AI governance frameworks, many organizations struggle to implement effective AI governance monitoring in practice. These challenges often limit visibility, slow down processes, and weaken overall model risk oversight.

Lack of Centralized AI Model Inventory

One of the biggest gaps in AI governance monitoring is the absence of a centralized view of all models.

Many organizations lack clarity on:

- How many models are currently in use

- Where those models are deployed

- Who is responsible for each model

Without this visibility, AI governance monitoring becomes fragmented. It also increases the risk of unmanaged models operating outside AI enterprise governance controls, making enterprise AI risk management more difficult.

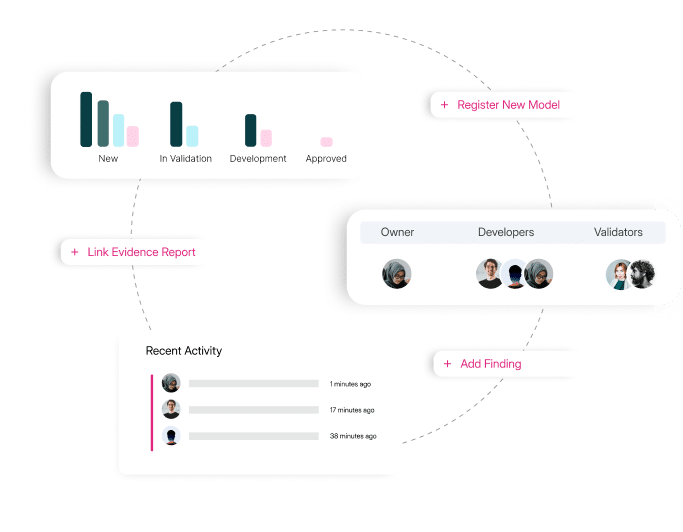

A centralized model inventory gives your organization a single place to register models, assign ownership, link evidence, track findings, and monitor recent activity across every team simultaneously.
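The three visibility questions above (how many models, where they run, and who owns them) map naturally onto a small registry structure. The following is a minimal in-memory sketch with hypothetical class and field names; it is not the data model of ValidMind or any other platform.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ModelRecord:
    model_id: str
    owner: Optional[str]  # None means nobody is accountable yet
    environment: str      # e.g. "development", "staging", "production"


class ModelInventory:
    """Single registry that answers: how many, where, and who."""

    def __init__(self) -> None:
        self._records: Dict[str, ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        if record.model_id in self._records:
            raise ValueError(f"model {record.model_id!r} is already registered")
        self._records[record.model_id] = record

    def count(self) -> int:
        return len(self._records)

    def deployed_in(self, environment: str) -> List[str]:
        return sorted(
            r.model_id for r in self._records.values()
            if r.environment == environment
        )

    def unowned(self) -> List[str]:
        """Models with no assigned owner, a typical governance gap."""
        return sorted(r.model_id for r in self._records.values() if r.owner is None)
```

Rejecting duplicate registrations is a deliberate choice in this sketch: it forces each model to have exactly one authoritative record, which is the point of centralizing the inventory.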

Manual Governance Processes

In many teams, AI governance monitoring still relies on outdated, manual workflows.

Common issues include:

- Heavy dependence on spreadsheets

- Manual approval processes

- Disconnected tools and systems

These approaches slow down decision-making and make AI compliance monitoring inconsistent. As the number of models grows, manual processes simply don’t scale, creating gaps in continuous AI governance monitoring.

Limited Visibility Across the AI Lifecycle

Another key challenge is the lack of end-to-end visibility.

Teams often struggle to track:

- Model updates and changes

- Retraining cycles

- Performance degradation over time

Without proper AI lifecycle monitoring, it becomes difficult to maintain strong model validation monitoring and ensure consistent responsible AI oversight. This directly impacts the effectiveness of AI governance monitoring.

How AI Governance Platforms Enable Effective Monitoring

AI governance monitoring becomes far more scalable and reliable when supported by the right technology. Modern AI governance platforms and AI governance software help organizations move from reactive processes to structured, continuous oversight.

Centralized Governance Infrastructure

A centralized AI governance system allows organizations to manage all models within a single environment.

This enables teams to:

- Track every model in one place

- Enforce AI governance models consistently

- Maintain continuous AI governance monitoring across the organization

With centralized infrastructure, organizations gain better control and visibility, strengthening overall AI enterprise governance.

Automated Risk Monitoring Workflows

Automation plays a key role in scaling AI governance monitoring.

Modern platforms enable:

- Automated alerts for model deviations

- Monitoring triggers based on risk thresholds

- Built-in governance review checkpoints

These capabilities reduce manual effort while improving consistency. As a result, teams can focus more on decision-making rather than operational overhead, improving enterprise AI risk management.
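Threshold-based triggers of this kind can be sketched in a few lines. The example below assumes each monitored metric has a warning level and a breach level, and treats a metric that was not reported at all as its own alert. The metric names and limits are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Mapping, NamedTuple, Optional, Sequence


class Alert(NamedTuple):
    metric: str
    severity: str            # "warn", "breach", or "missing"
    value: Optional[float]


@dataclass(frozen=True)
class Threshold:
    metric: str
    warn: float
    breach: float
    higher_is_worse: bool = True  # set False for metrics like accuracy

    def severity(self, value: float) -> Optional[str]:
        if self.higher_is_worse:
            if value >= self.breach:
                return "breach"
            if value >= self.warn:
                return "warn"
        else:
            if value <= self.breach:
                return "breach"
            if value <= self.warn:
                return "warn"
        return None


def evaluate(
    thresholds: Sequence[Threshold],
    observed: Mapping[str, float],
) -> List[Alert]:
    """Compare observed metrics with thresholds and return any alerts."""
    alerts = []
    for t in thresholds:
        if t.metric not in observed:
            # A metric that stopped reporting is itself a monitoring gap.
            alerts.append(Alert(t.metric, "missing", None))
            continue
        level = t.severity(observed[t.metric])
        if level is not None:
            alerts.append(Alert(t.metric, level, observed[t.metric]))
    return alerts
```

In practice the returned alerts would be routed to a ticketing or review workflow. That routing is omitted here because it depends entirely on the tools a team already uses.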

Integrated Model Risk Management

AI governance monitoring becomes more effective when tightly integrated with risk management systems.

By aligning governance with AI model risk management, organizations can:

- Strengthen model risk oversight

- Connect validation processes with ongoing monitoring

- Ensure continuous compliance across the AI lifecycle

This integration creates a unified approach where AI governance monitoring, validation, and risk management work together, enabling more reliable and scalable AI operations.

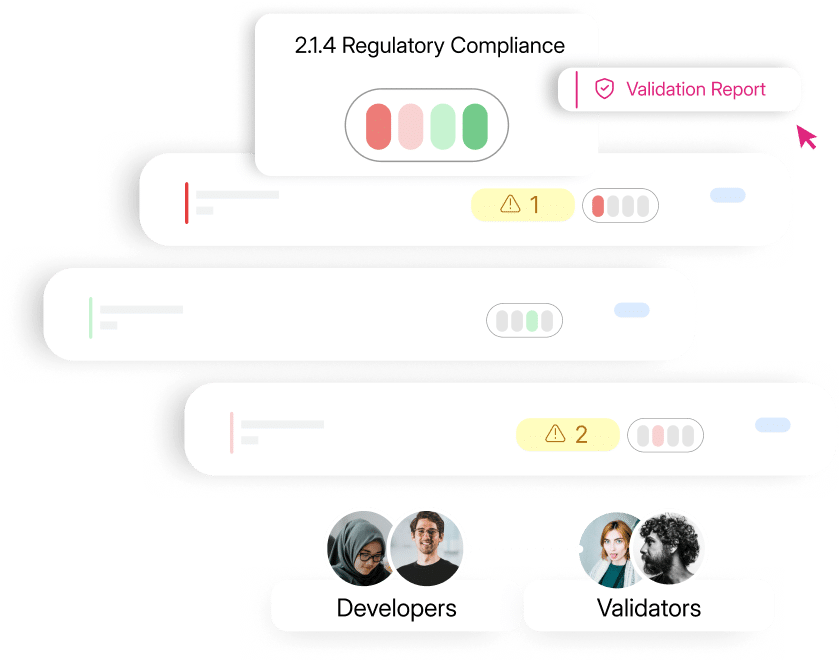

When compliance status is tracked at the section level and shared between developers and validators in real time, your teams resolve findings faster and maintain a continuous audit-ready record without manual coordination.

How ValidMind Supports AI Governance Monitoring

Organizations looking to scale AI governance monitoring need systems that bring structure, visibility, and consistency across the AI lifecycle. This is where platforms like ValidMind help bridge the gap between governance strategy and execution.

Centralized AI Governance Platform

ValidMind offers a unified AI governance environment that simplifies how teams manage and monitor AI systems.

With a centralized approach, organizations can:

- Manage end-to-end AI governance processes in one place

- Continuously track model compliance through AI governance monitoring

- Maintain audit-ready documentation aligned with regulatory expectations

This level of centralization strengthens AI enterprise governance while making AI compliance monitoring more consistent and scalable.

ValidMind gives your risk officers a live view of every model in the inventory, with findings categorized by total, open, and closed status and production models flagged for immediate attention.

End-to-End Model Risk Oversight

ValidMind’s AI model risk management capabilities extend AI governance monitoring into deeper risk-focused oversight.

Organizations can:

- Oversee model validation workflows with structured model validation monitoring

- Track performance across the lifecycle using AI lifecycle monitoring

- Maintain transparency across teams and stakeholders

This integrated approach improves model risk oversight and supports stronger enterprise AI risk management practices.

Seamless Governance Integrations

Modern AI governance monitoring depends heavily on how well systems integrate across the enterprise.

Strong end-to-end AI governance integrations improve data flow, reduce silos, and enable better coordination between teams responsible for AI governance, risk, and compliance.

These integrations help improve:

- Monitoring workflows by reducing manual handoffs

- Cross-team collaboration between data science, risk, and compliance teams

- Enforcement of AI governance models across systems

As a result, continuous AI governance monitoring becomes more practical and less resource-intensive.

Best Practices for Implementing AI Governance Monitoring

Establish a Clear AI Governance Framework

Effective AI governance monitoring starts with a well-defined foundation.

Organizations should clearly define:

- Governance responsibilities across teams

- Monitoring policies and control mechanisms

- Oversight committees for accountability

This ensures that AI governance is structured and aligned with broader AI enterprise governance goals.

Implement Continuous Monitoring Processes

Many organizations still rely on periodic reviews, which are no longer sufficient.

Shifting to continuous AI governance monitoring allows teams to:

- Detect issues in real time

- Maintain consistent AI compliance monitoring

- Strengthen ongoing responsible AI oversight

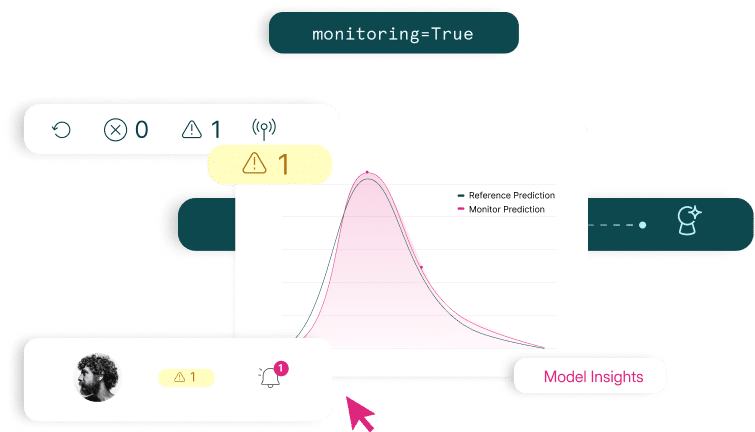

ValidMind’s monitoring engine compares reference predictions against live model outputs in real time, surfacing drift signals and routing alerts to your team the moment performance begins to deviate.

This transition is key to improving both reliability and model risk oversight.
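For readers who want a feel for what comparing reference predictions with live outputs can involve, here is a generic sketch using the two-sample Kolmogorov-Smirnov statistic on prediction scores. It is an illustrative technique chosen for this example and is not a description of how ValidMind's monitoring engine works. The function name is an assumption.

```python
from typing import Sequence


def ks_statistic(reference: Sequence[float], live: Sequence[float]) -> float:
    """Largest gap between the empirical CDFs of two samples (0.0 to 1.0)."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")
    ref, liv = sorted(reference), sorted(live)
    n, m = len(ref), len(liv)
    i = j = 0
    largest_gap = 0.0
    while i < n and j < m:
        x = min(ref[i], liv[j])
        # Advance both pointers past every value <= x, so ties are handled
        # together and i/n, j/m are the two CDFs evaluated at x.
        while i < n and ref[i] <= x:
            i += 1
        while j < m and liv[j] <= x:
            j += 1
        largest_gap = max(largest_gap, abs(i / n - j / m))
    return largest_gap
```

A value near 0 suggests the live score distribution still resembles the reference, while a value near 1 suggests the two barely overlap. The level at which to raise an alert is a policy decision that depends on sample size and model risk.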

Use AI Governance Platforms to Scale Monitoring

As AI adoption grows, manual processes cannot keep up.

Using AI governance platforms and AI governance software helps organizations:

- Improve visibility across all models

- Detect risks earlier through automated monitoring

- Streamline compliance tracking and reporting

Scaling AI governance monitoring effectively depends on how well governance is built into day-to-day workflows, ensuring that controls do not slow down innovation.

A structured approach to scaling AI governance without slowing down AI development helps organizations maintain speed while strengthening AI enterprise governance and overall enterprise AI risk management.

Align Governance Monitoring with Business Risk

Not all AI systems carry the same level of risk.

Organizations should align AI governance monitoring priorities with:

- Operational risk levels

- Financial exposure

- Regulatory obligations

By doing so, teams can focus efforts where they matter most, strengthening enterprise AI risk management while ensuring AI governance monitoring remains both efficient and impactful.

The Future of AI Governance Monitoring

AI governance monitoring will become increasingly critical as AI adoption continues to expand across industries. As more business decisions rely on AI systems, the need for structured and scalable AI governance monitoring will only grow stronger.

At the same time, regulatory expectations are rising. Organizations are now expected to demonstrate continuous AI compliance monitoring, clear documentation, and strong model risk oversight practices.

Looking ahead, AI governance monitoring will evolve to focus on:

- Continuous, real-time oversight across the AI lifecycle

- Explainability tracking to support responsible AI oversight

- Automated regulatory reporting and audit readiness

To meet these demands, organizations will need advanced AI governance platforms and AI governance software that support continuous AI governance monitoring at scale.

Ultimately, AI governance monitoring will become a core capability within AI enterprise governance, enabling organizations to manage complexity while maintaining trust, transparency, and control.

Conclusion

AI governance monitoring is essential for maintaining strong model risk oversight in today’s rapidly evolving AI landscape. As AI systems grow more complex, organizations must move beyond static AI governance policies and adopt continuous, lifecycle-based monitoring.

By implementing structured AI governance monitoring, businesses can improve visibility, strengthen enterprise AI risk management, and ensure consistent AI compliance monitoring. Centralized platforms that combine governance and risk oversight make it easier to maintain documentation, enforce policies, and scale AI responsibly.

Organizations looking to strengthen AI governance monitoring can consider solutions like ValidMind, which support centralized workflows, AI governance models, and end-to-end oversight. Scheduling a demo can help your team understand how to operationalize governance while maintaining transparency and control across AI systems.

AI Governance Monitoring FAQs

1. What is AI governance monitoring?

AI governance monitoring refers to the continuous oversight of AI systems to ensure they follow governance policies, regulatory requirements, and organizational risk controls. It involves tracking model performance, validation compliance, documentation updates, and operational risk throughout the AI lifecycle.

2. Why is AI governance monitoring important for enterprises?

AI governance monitoring helps enterprises detect model drift, maintain compliance, and ensure AI systems operate within approved risk limits. Continuous monitoring reduces operational risk, supports regulatory reporting, and ensures that AI models remain aligned with business and ethical standards.

3. How does AI governance monitoring improve model risk oversight?

AI governance monitoring strengthens model risk oversight by continuously evaluating model performance, detecting anomalies, and enforcing governance controls. It allows organizations to identify risks early, maintain audit-ready documentation, and ensure AI systems remain compliant throughout their lifecycle.

4. What are the key components of AI governance monitoring?

Key components include AI lifecycle monitoring, model validation tracking, compliance monitoring, documentation management, and risk-based oversight. These elements help organizations maintain visibility across AI systems and ensure governance policies are enforced consistently.

5. What challenges do organizations face with AI governance monitoring?

Many organizations struggle with fragmented governance processes, lack of centralized model inventories, manual documentation tracking, and limited visibility into deployed AI models. Without structured monitoring systems, it becomes difficult to maintain consistent oversight and regulatory compliance.

6. How does AI governance monitoring support regulatory compliance?

AI governance monitoring helps organizations demonstrate compliance with regulatory standards by maintaining clear validation records, monitoring model behavior, and ensuring governance policies are enforced consistently across AI systems.

7. What role do AI governance platforms play in monitoring?

AI governance platforms provide centralized infrastructure for monitoring AI models, managing documentation, and enforcing governance workflows. These platforms help organizations automate oversight processes and maintain transparency across the AI lifecycle.

8. How is AI governance monitoring different from model validation?

Model validation evaluates whether a model performs correctly before deployment, while AI governance monitoring ensures the model continues operating within risk and compliance standards after deployment.

9. What industries benefit most from AI governance monitoring?

Highly regulated industries such as banking, financial services, healthcare, and insurance benefit most from AI governance monitoring because they must meet strict regulatory and risk management requirements.

10. How can organizations implement AI governance monitoring effectively?

Organizations can implement AI governance monitoring by establishing governance policies, maintaining centralized model inventories, implementing lifecycle monitoring, and using AI governance platforms that automate oversight and compliance workflows.