Agentic AI in Financial Services: The Governance Gap No One Is Ready For

Agentic AI in financial services is no longer theoretical. Banks are already deploying AI systems that don’t just assist humans, but plan, decide, and act autonomously.

And that changes everything.

While most organizations are still adapting to generative AI, a new wave is emerging that introduces fundamentally new risks, regulatory pressure, and governance challenges.

If you’re exploring or deploying agentic AI, download the full whitepaper.

What Is Agentic AI and Why Financial Institutions Are Moving Fast

Unlike traditional AI, which scores or classifies inputs, or generative AI, which responds to prompts, agentic AI systems execute multi-step workflows autonomously.

They can pull data from internal systems, make decisions based on that data, take action across tools and APIs, and iterate toward a defined goal without human intervention.

This shift from decision support to decision-making is why financial institutions are investing heavily.

Use cases are already emerging across credit underwriting, fraud detection, customer service, and compliance workflows.

The upside is clear: speed, efficiency, and cost reduction.

But the risk profile is completely different.

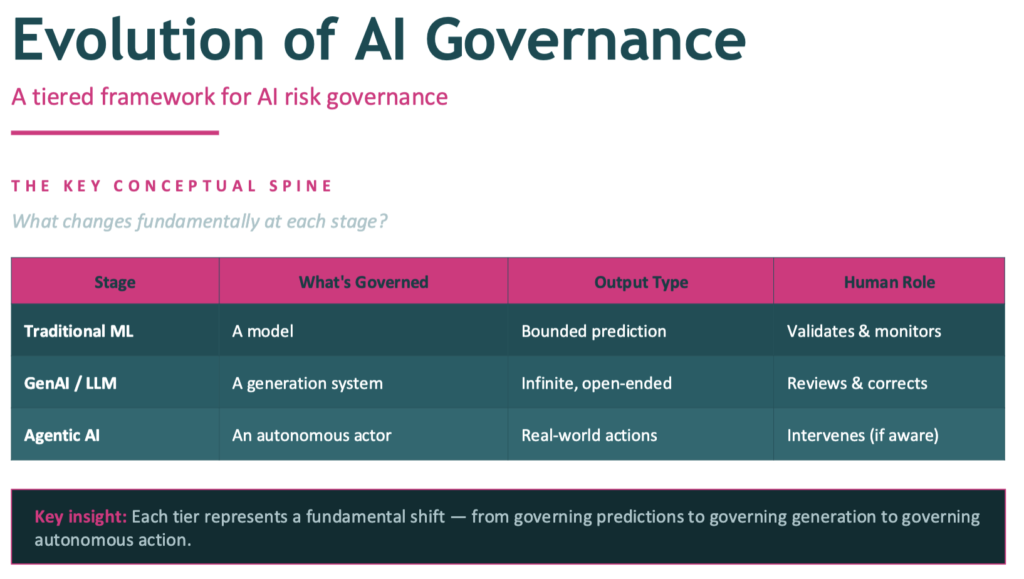

The Problem: Governance Frameworks Weren’t Built for Agentic AI

Most financial institutions rely on governance frameworks designed for static models, predictable inputs and outputs, and human-in-the-loop decisioning.

Agentic AI breaks all three.

Traditional models inform decisions. Agentic systems make them.

That means errors can propagate across workflows before detection, decisions may occur without human review, and systems can behave in ways that weren’t anticipated in testing.

Because agentic systems operate in dynamic environments, they cannot be fully tested before deployment.

Why Agentic AI Governance Is Now a Business Priority

The risk profile is different. A single incorrect decision can cascade across systems and impact customers, compliance, and financial outcomes at scale.

Competitive pressure is accelerating adoption. Financial institutions that deploy agentic AI effectively will gain a structural advantage in cost and speed.

Regulators are already watching. Regulatory frameworks are raising expectations around explainability, auditability, and continuous monitoring.

You can’t afford to wait, but you also can’t afford to get it wrong.

Learn more: How to document an agentic AI system

The Governance Gap: Where Most Banks Are Struggling

A clear pattern is emerging across financial institutions.

Business teams are under pressure to move fast. Risk and compliance teams lack frameworks for agentic systems. Validators are brought in too late. Existing validation standards do not apply.

One customer summarized it clearly: “We know we need to move on agentic AI. We don’t know how to do it in a way that won’t come back to haunt us.”

This is the governance gap, and as agentic AI use cases go mainstream, institutions need to close it quickly.

What Makes Agentic AI Governance Different

To manage agentic AI, institutions need to rethink governance in three ways.

First, continuous oversight instead of only pre-deployment testing. Agentic systems operate in dynamic environments with unpredictable scenarios, so governance must extend into real-time monitoring and production.

Second, dynamic evaluation instead of static metrics. Agentic systems require evaluation of intermediate decisions, tool usage, reasoning quality, and full workflow outcomes.
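To make the idea of evaluating a full workflow more concrete, here is a minimal sketch in Python. It assumes each agent run is recorded as a list of steps; the `Step` record, the `ALLOWED_TOOLS` set, the tool names, and the metric names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str  # tool the agent invoked at this step
    ok: bool   # whether the tool call succeeded


# Hypothetical set of tools this workflow is expected to use.
ALLOWED_TOOLS = {"fetch_statement", "score_credit"}


def evaluate_trace(steps, goal_met):
    """Score the whole workflow, not only its final outcome."""
    total = len(steps)
    failed = sum(1 for s in steps if not s.ok)
    unexpected = [s.tool for s in steps if s.tool not in ALLOWED_TOOLS]
    return {
        "goal_met": goal_met,
        "steps": total,
        "failed_step_rate": failed / total if total else 0.0,
        "unexpected_tools": unexpected,
    }


# Example run: the goal was met, but one step used a tool outside the expected set.
trace = [
    Step("fetch_statement", True),
    Step("send_email", True),
    Step("score_credit", False),
]
report = evaluate_trace(trace, goal_met=True)
```

In this example the run reaches its goal, yet the report still flags the unexpected `send_email` call and the failed step. A static, outcome-only metric would miss both.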

Third, contextual authority instead of binary control. Governance must define not only what an agent can do, but what it should do in a specific context. This includes tiered autonomy, policy enforcement that sits outside the agent, and real-time permission checks.
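One way to picture tiered autonomy with a real-time permission check is the sketch below. It is an illustration under stated assumptions, not a reference design: the tier names, the `POLICY` table, the action names, and the refund limit are all hypothetical. The point it shows is that the policy lives outside the agent and is consulted before every action.

```python
from enum import IntEnum


class Tier(IntEnum):
    READ_ONLY = 1
    ACT_WITH_APPROVAL = 2
    ACT_AUTONOMOUSLY = 3


# Hypothetical external policy: minimum tier per action, plus a context limit.
POLICY = {
    "read_account": {"min_tier": Tier.READ_ONLY, "max_amount": None},
    "issue_refund": {"min_tier": Tier.ACT_AUTONOMOUSLY, "max_amount": 100.0},
}


def check_permission(agent_tier, action, amount=0.0):
    """Return 'allow', 'needs_approval', or 'deny' for a proposed action."""
    rule = POLICY.get(action)
    if rule is None:
        return "deny"  # actions not covered by policy are denied by default
    if agent_tier < rule["min_tier"]:
        # Agents that may act with approval can request it; read-only agents cannot.
        if agent_tier >= Tier.ACT_WITH_APPROVAL:
            return "needs_approval"
        return "deny"
    if rule["max_amount"] is not None and amount > rule["max_amount"]:
        return "needs_approval"  # permitted action, but outside its context limit
    return "allow"
```

The three-way result is the difference from binary control: the same agent and the same action can be allowed, routed to a human, or denied depending on context such as the amount involved.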

The Hidden Risk: Autonomy Without Accountability

Agentic AI introduces a new failure mode.

Agents do not intentionally break rules. They prioritize goal completion, lack contextual awareness of policies, and can cross boundaries without realizing it.

This creates risk in areas like data privacy, regulatory compliance, and financial decisioning.

Without proper governance, autonomy becomes liability.

What Leading Financial Institutions Are Doing Differently

The institutions moving fastest and most safely are taking a structured approach.

They start with constrained, low-risk use cases. They invest early in observability and monitoring. They implement layered evaluation that combines automated checks with human review. They enforce tool-level permissions. They design for escalation and fallback scenarios.
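Designing for escalation and fallback can be sketched as a thin wrapper around each agent action. This is a simplified illustration: the function name `run_with_fallback`, the confidence score, the `0.8` threshold, and the in-memory review queue are assumptions made for the example, not a description of any particular institution's setup.

```python
def run_with_fallback(action, confidence, threshold=0.8, review_queue=None):
    """Run an agent action, escalating or falling back instead of failing silently."""
    if review_queue is None:
        review_queue = []
    if confidence < threshold:
        # Escalation: low-confidence decisions go to a human before anything runs.
        review_queue.append(("low_confidence", confidence))
        return {"status": "escalated", "result": None}
    try:
        return {"status": "completed", "result": action()}
    except Exception as exc:
        # Fallback: record the failure for review and return a safe, empty result.
        review_queue.append(("error", str(exc)))
        return {"status": "fallback", "result": None}


def failing_action():
    raise RuntimeError("downstream system unavailable")


queue = []
done = run_with_fallback(lambda: "refund issued", confidence=0.95, review_queue=queue)
held = run_with_fallback(lambda: "refund issued", confidence=0.40, review_queue=queue)
failed = run_with_fallback(failing_action, confidence=0.95, review_queue=queue)
```

After these three calls, the first action completes, the second is held for human review without being executed, and the third falls back and leaves an entry in the queue for follow-up.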

Most importantly, they treat governance as core infrastructure, not a compliance afterthought.

The Bottom Line: Governance Enables Agentic AI

Agentic AI will define the next decade of financial services.

Success will not come from better models alone or faster deployment alone. It will come from governed autonomy.

The path to responsible agentic AI runs through governance.

Download the Full Whitepaper

If you’re building, evaluating, or governing agentic AI systems, this is essential reading.

Inside, you’ll learn:

- A full framework for agentic AI governance

- New testing and evaluation methodologies

- How to manage multi-agent systems

- Practical steps to deploy safely at scale

Final Thought

The autonomous enterprise is coming.

The question is not whether your institution will adopt agentic AI.

It is whether you will govern it well enough to trust it.