Announcing Our Closed Beta for Large Language Model Testing — Coming Soon!

Understanding the risks linked to large language models (LLMs) and generative AI technologies is a complex challenge. Existing model risk frameworks often don’t capture issues like truthfulness or hallucinations. Similarly, today’s tools simply aren’t robust enough to test and document the data and language that LLMs produce. This gap makes it hard for developers to create documentation and ensure compliance, which slows the path to production.

We believe there is a better, easier way. To help organizations address these risks and regulatory requirements and reap the full benefits of AI, we’re thrilled to announce our first closed beta for model testing and documentation generation built specifically for large language models!

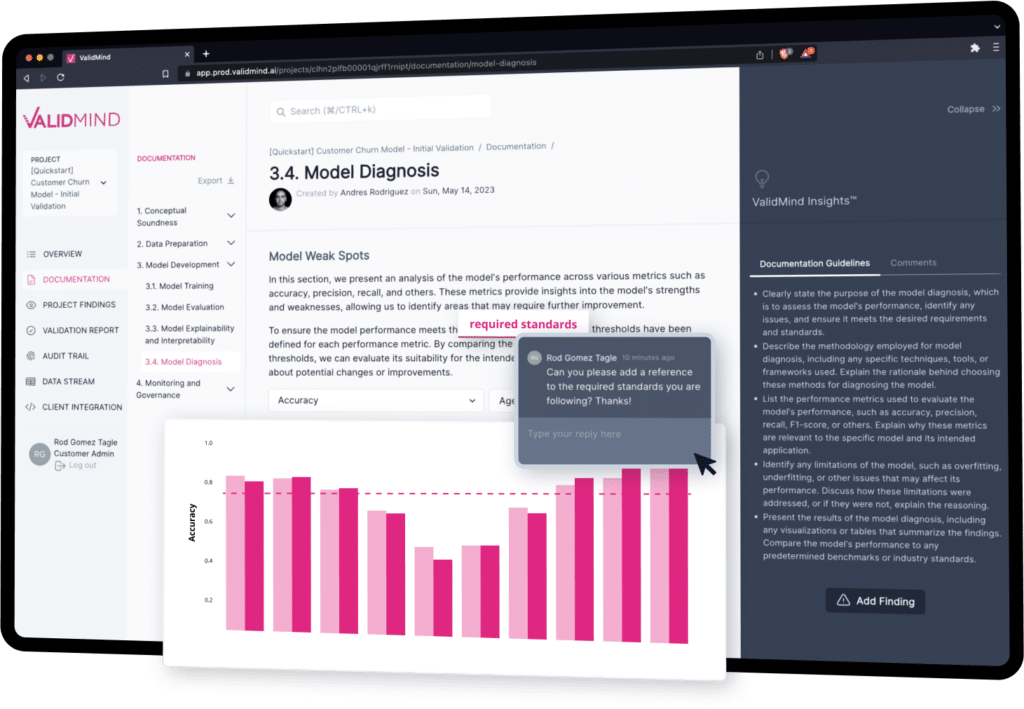

What is ValidMind?

The ValidMind platform is a suite of tools that helps developers, data scientists, and risk & compliance stakeholders identify potential risks in their AI and large language models and generate robust, high-quality model documentation that meets regulatory requirements. The platform is adept at handling LLM use cases, with a focus on text classification and text summarization. Supported models include those compatible with the Hugging Face Transformers API, GPT-3.5, GPT-4, and hosted Llama 2 and Falcon-based models.

In addition to LLMs, ValidMind supports testing and documentation generation for a wide variety of other models, including:

- Traditional machine learning (ML) models, such as tree-based models and neural networks

- Natural language processing (NLP) models

- Traditional statistical models, such as OLS regression, logistic regression, and time series models

- And many more model types

What sets ValidMind apart is its focus on simplifying complex tasks for both model developers and validators. By automating critical aspects of the model lifecycle, such as documentation, validation, and testing, we enable model developers to concentrate on building better models.

What’s in the closed beta?

We plan to make most of our LLM testing and documentation generation features available for you to try during the closed beta. This includes both ValidMind Model Documentation Automation and the ValidMind AI Governance and Risk Management Platform.

ValidMind Model Documentation Automation

Our Model Documentation Automation provides the ValidMind Developer Framework, a library of tools and methods that automates generating model documentation and running model validation tests. The framework is designed to be platform agnostic and to integrate with your existing development environment.

ValidMind AI Governance and Risk Management Platform

Our platform isn’t just about automation; it’s also about collaboration. We make it easy for model developers, model validators, and other risk & compliance stakeholders to collaborate and share information at each stage of the model documentation and validation process. Our model inventory covers the full model lifecycle.

Here’s what you can do with our easy-to-use web interface:

- Create customized workflows for managing model documentation and validation with the model inventory

- Create and organize templates to automate model documentation for different models

- Review and edit documentation and test results generated with the ValidMind Developer Framework to ensure they meet requirements and accurately reflect all the necessary data

- Collaborate with other model developers and validators to capture their feedback and findings

- Export documentation in easily shareable formats, such as Word and PDF

Closed beta for LLM testing

Ready to join us on this exciting adventure? Sign up for the waitlist to take the first step in exploring all that ValidMind has to offer. We expect the closed beta to go live in early fall 2023.